AI Visual Inspection for 3D Printing: Automating Post-Print Quality Control

Does your 3D printer always produce perfect parts?

If you’re being honest, the answer is “no.” Parts produced via FDM harbor countless defects—layer shifts, stringing, warping, delamination. The problem isn’t the defects themselves; it’s that the human eye cannot reliably detect them. Lighting angles, fatigue, and the subjective bias of “that’s probably good enough” turn visual inspection into nothing more than a mood-dependent gamble masquerading as quality control.

This article explores how AI visual inspection for 3D printing can fully automate post-print quality control. A critical distinction: this article addresses post-process visual inspection—not in-process spaghetti detection. While tools like Obico and Bambu Lab’s built-in cameras “stop failures during printing,” the technology discussed here determines “whether a finished part meets quality standards.”

- The Limits of Visual Inspection—Why Human Eyes Misjudge Print Quality

- Computer Vision × 3D Print Defect Detection: 2024–2025 Research Landscape

- Practical Guide: Building a $150 Raspberry Pi Inspection Station

- Defect Classification Accuracy: Detection Performance Across 7 Major Categories

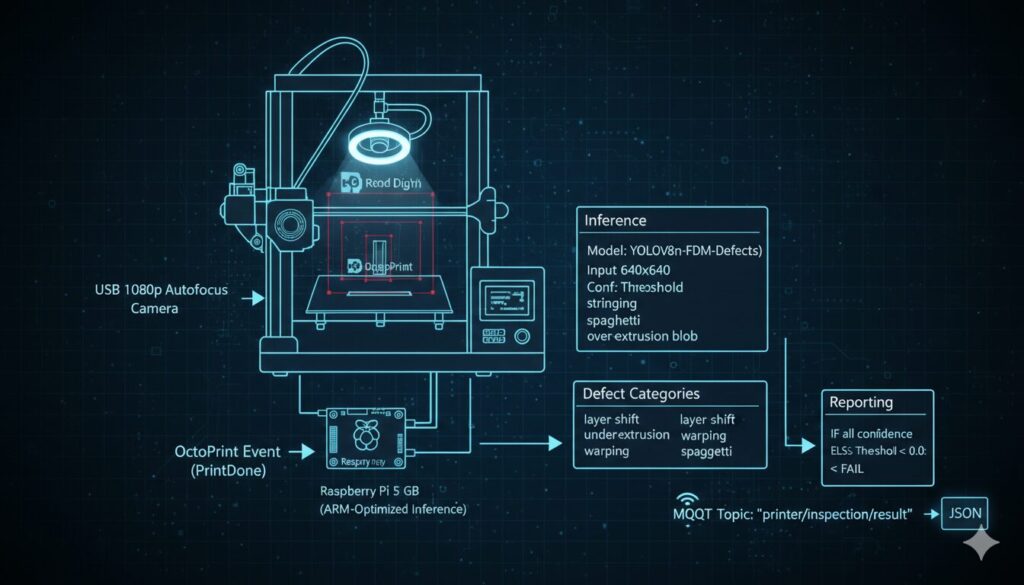

- Print Farm Integration: OctoPrint × Inspection Agent Architecture

- Conclusion: Automated Quality Control Transforms 3D Printing into Manufacturing

The Limits of Visual Inspection—Why Human Eyes Misjudge Print Quality

Subjectivity as a Structural Flaw

Inspectors on a quality control line visually examine hundreds to thousands of parts per day. The problem isn’t “missed defects” but “inconsistent judgment criteria.”

3D print defects are inherently subtle. A 0.3mm layer shift may or may not be visible depending on lighting. Acceptable stringing levels vary between inspectors, and pass/fail decisions can differ between morning and evening shifts. Research shows that visual inspection reproducibility (the probability of the same inspector making the same judgment on the same part) is only 70–85%.
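Reproducibility in this sense is easy to measure on your own bench: have the same inspector judge the same parts twice and count how often the verdicts agree. The following is a minimal sketch; the function name and the sample verdicts are illustrative assumptions, not data from a study.

```python
def repeatability(first_pass: list[str], second_pass: list[str]) -> float:
    """Fraction of parts that received the same verdict in both passes."""
    if len(first_pass) != len(second_pass) or not first_pass:
        raise ValueError("both passes must cover the same non-empty set of parts")
    agreements = sum(a == b for a, b in zip(first_pass, second_pass))
    return agreements / len(first_pass)


# Hypothetical verdicts for ten parts, judged in the morning and again in the evening.
morning = ["pass", "pass", "fail", "pass", "fail", "pass", "pass", "fail", "pass", "pass"]
evening = ["pass", "fail", "fail", "pass", "pass", "pass", "pass", "fail", "pass", "fail"]

print(f"{repeatability(morning, evening):.0%}")  # prints 70%
```

A camera-based system judged the same way should score close to 100% as long as the capture conditions stay fixed, which is the consistency argument made throughout this article.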

The Physical Limits of the Human Eye

The human eye’s spatial resolution is approximately 0.1mm under optimal conditions. However, many defects affecting FDM print quality are smaller or require specific conditions to detect.

| Defect Type | Typical Size | Visual Detection Difficulty |
|---|---|---|
| Layer Shift | 0.1–1.0mm | Difficult below 0.3mm |
| Under-Extrusion | 0.05–0.3mm gaps | Highly dependent on lighting |
| Delamination | Sub-surface internal defect | Nearly impossible visually |
| Stringing | 0.1–0.5mm diameter threads | Depends on background contrast |
| Warping | 0.1–2.0mm deformation | Difficult from top-down view |

Particularly critical is delamination (inter-layer separation). This is an internal adhesion failure that may look normal on the surface but fails catastrophically under load. No inspector can reliably detect this through visual observation alone.

For a single hobbyist printer, visual inspection is “tedious but manageable.” However, as home-based “dark factories” emerge with 5, 10, or even 50 printers, visual inspection becomes a fundamental bottleneck to scaling production.

This is where the case for automated inspection through computer vision becomes compelling.

Computer Vision × 3D Print Defect Detection: 2024–2025 Research Landscape

Evolution of YOLO Architecture for 3D Print Applications

The YOLO (You Only Look Once) series, synonymous with object detection, has rapidly delivered results in 3D print defect detection. Here is a summary of key research findings published between 2024 and 2025.

| Model | mAP@0.5 | mAP@0.5:0.95 | Precision | FPS | Source |
|---|---|---|---|---|---|
| YOLOv11s | 83.08% | 53.61% | n/a | n/a | Springer, J. Intelligent Manufacturing (2024) |
| YOLOv11m | n/a | n/a | 91.28% | n/a | Springer, J. Intelligent Manufacturing (2024) |
| Improved YOLOv8 | 97.5% | n/a | n/a | n/a | IJAMT (2025), GFLOPs reduced by 32.9% |
| ViT (Vision Transformer) | n/a | n/a | 95.98% (accuracy) | n/a | Nature npj Advanced Manufacturing (2025) |
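For readers new to the metric: mAP@0.5 counts a predicted box as correct only when its intersection over union (IoU) with the ground-truth box is at least 0.5, and mAP@0.5:0.95 averages the result over IoU thresholds from 0.5 to 0.95. A minimal sketch of the IoU calculation follows; the box coordinates are made-up values for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap; zero when the boxes do not intersect.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


predicted = (100, 100, 200, 200)     # hypothetical detection of a layer shift
ground_truth = (120, 120, 220, 220)  # hypothetical annotated box

score = iou(predicted, ground_truth)
print(f"IoU = {score:.2f}, counts at mAP@0.5: {score >= 0.5}")
# prints: IoU = 0.47, counts at mAP@0.5: False
```

The example shows why mAP@0.5:0.95 is always the lower number: a box that is off by 20 pixels on a 100-pixel defect already fails the loosest threshold.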

Balancing Accuracy and Efficiency

Two points deserve attention here. First, the improved YOLOv8 achieves a remarkable 97.5% mAP50 while reducing GFLOPs by 32.9%. This demonstrates the ideal evolutionary direction: “more accurate, yet lighter.”

Second, the Vision Transformer (ViT) reaches 95.98% accuracy through a fundamentally different approach from CNN-based YOLO. In the study, published in Nature npj Advanced Manufacturing (2025), ViT scored 95.98% against 95.90% for the CNN baseline on a 900-sample dataset, which is a negligible margin. The Transformer’s advantage becomes more pronounced with smaller datasets (180–300 samples). This suggests that Transformer architectures are well suited to detecting “fine-grained textural anomalies” in 3D prints, particularly when training data is scarce.

Dataset Maturation

The accuracy of AI visual inspection for 3D printing is underpinned by high-quality datasets. This field reached a major turning point in 2025.

Previous major datasets were relatively small-scale: Kaggle’s “FDM 3D Printing Defect Dataset” (by wengmhu, 5 defect types) and Roboflow’s “HCMUT 3D Printing Defects” (5,900 images, 3 categories: spaghetti/stringing/zits).

However, the 3D-ADAM dataset published in the journal Additive Manufacturing in early 2025 changed the game entirely. With 14,120 CT scans from 217 parts across 12 defect categories, it delivers a scale and granularity previously unavailable in public datasets.

The significance of 3D-ADAM extends beyond volume. Its 12 fine-grained defect categories make it practically feasible for the first time to train models that can distinguish between similar defects like “stringing” and “blobs.”

The Technical Disconnect Between “In-Process” and “Post-Process”

Here lies the core argument of this article. Existing AI inspection solutions are almost entirely specialized for in-process anomaly detection.

- Obico (formerly Spaghetti Detective): 100,000+ users, 1M+ failures detected. However, real-time in-process monitoring only

- PrintWatch: ML-based in-process defect detection. Post-print bed check feature is in beta

- BedCheckAI: Checks whether the bed is clear. Not quality inspection

- Bambu Lab Built-in Camera: NPU-based spaghetti detection. In-process only

- SimplyPrint: Cloud-based spaghetti/warping/blobbing detection. Again, in-process only

These tools are highly effective at “stopping failed prints mid-way.” However, none of them offer the capability to “automatically determine whether a finished part meets quality standards.”

Limitations of Industrial Solutions

Industrial solutions such as Hexagon’s QUINDOS and GOM Inspect are designed for CMM and 3D scanning-based dimensional measurement—not for visual surface defect detection from camera images. Similarly, Instrumental’s electronics manufacturing inspection system and Landing AI’s visual inspection platform target traditional manufacturing lines, not additive manufacturing-specific defect patterns.

In short, “post-print visual inspection for 3D printing” is a clearly defined open gap in the current ecosystem.

Practical Guide: Building a $150 Raspberry Pi Inspection Station

Hardware Configuration

| Component | Recommended Product | Est. Price | Rationale |
|---|---|---|---|
| SBC | Raspberry Pi 5 (8GB) | ~$80 | ARM Cortex-A76 2.4GHz, NCNN runtime compatible |
| Camera | USB Webcam (1080p+) | ~$30 | Autofocus recommended |
| LED Lighting | Ring Light (5000K) | ~$15 | Consistent lighting conditions |
| Camera Mount | Flexible Arm | ~$10 | Top-down overhead capture |
| microSD Card | 64GB A2 Class | ~$15 | NCNN model storage |
| Total | | ~$150–200 | |

Inference Performance Comparison

CPU-only (NCNN runtime): YOLOv8n / YOLO11n @ 640×640: ~10–12 FPS. Power consumption: ~5W. Additional cost: $0.

Hailo-8L AI Kit: YOLOv8n @ 640×640: ~136.7 FPS. Computing power: 13 TOPS. Additional cost: ~$70.

For post-print visual inspection, you simply capture and analyze a single image of the finished part. Real-time video stream processing is unnecessary, and ~100ms per inference on CPU-only is perfectly practical. The AI Kit investment is only justified in high-throughput environments where inspection images arrive continuously from 5+ printers in a print farm.
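The per-image latency follows directly from the FPS figures quoted above. A small sketch of the conversion, using only the numbers from this section:

```python
def latency_ms(fps: float) -> float:
    """Time spent on one image, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


for label, fps in [
    ("CPU-only (10 FPS)", 10.0),
    ("CPU-only (12 FPS)", 12.0),
    ("Hailo-8L (136.7 FPS)", 136.7),
]:
    print(f"{label}: {latency_ms(fps):.1f} ms per image")
# prints:
# CPU-only (10 FPS): 100.0 ms per image
# CPU-only (12 FPS): 83.3 ms per image
# Hailo-8L (136.7 FPS): 7.3 ms per image
```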

Standardizing the Capture Environment

Computer vision accuracy depends more on “input image quality” than model performance. Standardize your inspection station by fixing these conditions:

- Fixed Lighting: Position a ring light (5000K, CRI 90+) coaxially with the camera to minimize shadows

- Uniform Background: Place a white or black solid matte on the bed. PEI bed reflections introduce noise

- Fixed Camera Distance: Mount vertically 200–250mm above bed center. Frame the entire build volume

- Manual White Balance: Disable auto-adjustment and manually set to 5000K

Keeping these conditions identical for every capture is what makes detection results reproducible.
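One way to notice when the capture environment has drifted (a dimming LED, a bumped ring light) is to compare each capture against a reference frame taken when the station was set up. The sketch below is an assumption-laden illustration rather than part of the original pipeline: it treats images as plain lists of grayscale pixel rows, and the tolerance of 10 brightness levels is an arbitrary starting point to tune.

```python
from statistics import mean


def mean_brightness(gray):
    """Average pixel value of a grayscale image given as rows of 0-255 ints."""
    return mean(pixel for row in gray for pixel in row)


def lighting_drifted(reference, capture, tolerance=10.0):
    """True when mean brightness differs from the reference by more than tolerance."""
    return abs(mean_brightness(capture) - mean_brightness(reference)) > tolerance


# Tiny stand-in images; a real frame would come from the camera.
reference = [[200, 202], [198, 200]]  # frame stored at setup time
same_light = [[201, 199], [203, 197]]
dimmer = [[170, 172], [168, 170]]

print(lighting_drifted(reference, same_light))  # prints False
print(lighting_drifted(reference, dimmer))      # prints True
```

Running a check like this on a fixed patch of the background matte, rather than the whole frame, keeps the printed part itself from influencing the result.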

Software Stack Configuration
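The inspector class in the next section reads two settings, `inference.model` and `capture.delay_after_print`, from a configuration file. The file format is not specified in this article, so the following sketch assumes JSON. Every value shown (the model path, the 300-second delay, the image path) is a placeholder, and `load_config` is a hypothetical helper, not the article's `_load_config`.

```python
import copy
import json

# Placeholder defaults; only the key names "inference.model" and
# "capture.delay_after_print" are taken from the inspector code.
DEFAULT_CONFIG = {
    "capture": {
        "delay_after_print": 300,  # seconds to wait for the part to cool
        "image_path": "/tmp/inspection_latest.jpg",
    },
    "inference": {
        "model": "models/defects_ncnn_model",  # hypothetical path
        "conf": 0.25,  # detection confidence threshold
    },
}


def load_config(text: str) -> dict:
    """Parse a JSON config and fill in any keys the user left out."""
    user = json.loads(text)
    config = copy.deepcopy(DEFAULT_CONFIG)
    for section, values in user.items():
        config.setdefault(section, {}).update(values)
    if config["capture"]["delay_after_print"] < 0:
        raise ValueError("capture.delay_after_print must not be negative")
    return config


config = load_config('{"capture": {"delay_after_print": 120}}')
print(config["capture"]["delay_after_print"])  # prints 120
print(config["inference"]["conf"])             # prints 0.25
```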

Implementing the Inspection Flow

```python
import time
from dataclasses import dataclass
from pathlib import Path


@dataclass
class DefectResult:
    category: str
    confidence: float
    bbox: tuple  # (x1, y1, x2, y2)


class PostProcessInspector:
    """
    Executes post-print visual inspection.
    Triggered by OctoPrint PrintDone event.

    The helpers _load_config, _load_ncnn_model, and _capture_from_usb
    are setup-specific and are not defined in this listing.
    """

    def __init__(self, config_path: str):
        self.config = self._load_config(config_path)
        self.model = self._load_ncnn_model(
            self.config["inference"]["model"]
        )

    def capture_image(self) -> Path:
        """
        Wait for cooling after print completion,
        then capture a single high-resolution image.
        """
        delay = self.config["capture"]["delay_after_print"]
        time.sleep(delay)
        image_path = Path("/tmp/inspection_latest.jpg")
        self._capture_from_usb(image_path)
        return image_path

    def run_inspection(self) -> dict:
        """
        Execute full inspection pipeline.
        Returns: {decision, defects, severity, image_path}
        """
        image = self.capture_image()
        # Each detection is expected to expose class_name, confidence, and bbox.
        detections = self.model.predict(image, conf=0.25)
        defects = [
            DefectResult(
                category=det.class_name,
                confidence=det.confidence,
                bbox=det.bbox,
            )
            for det in detections
        ]
        severity = self._classify_severity(defects)
        decision = self._make_decision(severity)
        return {
            "decision": decision,
            "defects": defects,
            "severity": severity,
            "image_path": str(image),
        }

    def _classify_severity(self, defects: list) -> str:
        """
        3-level severity classification:
        - CRITICAL: delamination, layer_shift (conf > 0.8)
        - WARNING: under_extrusion, warping
        - INFO: stringing, blobs (cosmetic only)
        """
        # Check all defects for CRITICAL before WARNING so the result
        # does not depend on the order in which detections arrive.
        if any(
            d.category in ("delamination", "layer_shift") and d.confidence > 0.8
            for d in defects
        ):
            return "CRITICAL"
        if any(d.category in ("under_extrusion", "warping") for d in defects):
            return "WARNING"
        return "INFO" if defects else "PASS"

    def _make_decision(self, severity: str) -> str:
        return {
            "CRITICAL": "FAIL",
            "WARNING": "REVIEW",
            "INFO": "PASS",
            "PASS": "PASS",
        }[severity]
```

Defect Severity Classification and Automated Judgment

The key implementation point is the three-tier severity classification. Rather than binary pass/fail, defects are categorized into CRITICAL (structural defects requiring immediate rejection), WARNING (functional defects requiring human review), and INFO (cosmetic defects that are acceptable). This graduated approach prevents both over-rejection and under-detection—maximizing the practical value of automated inspection.

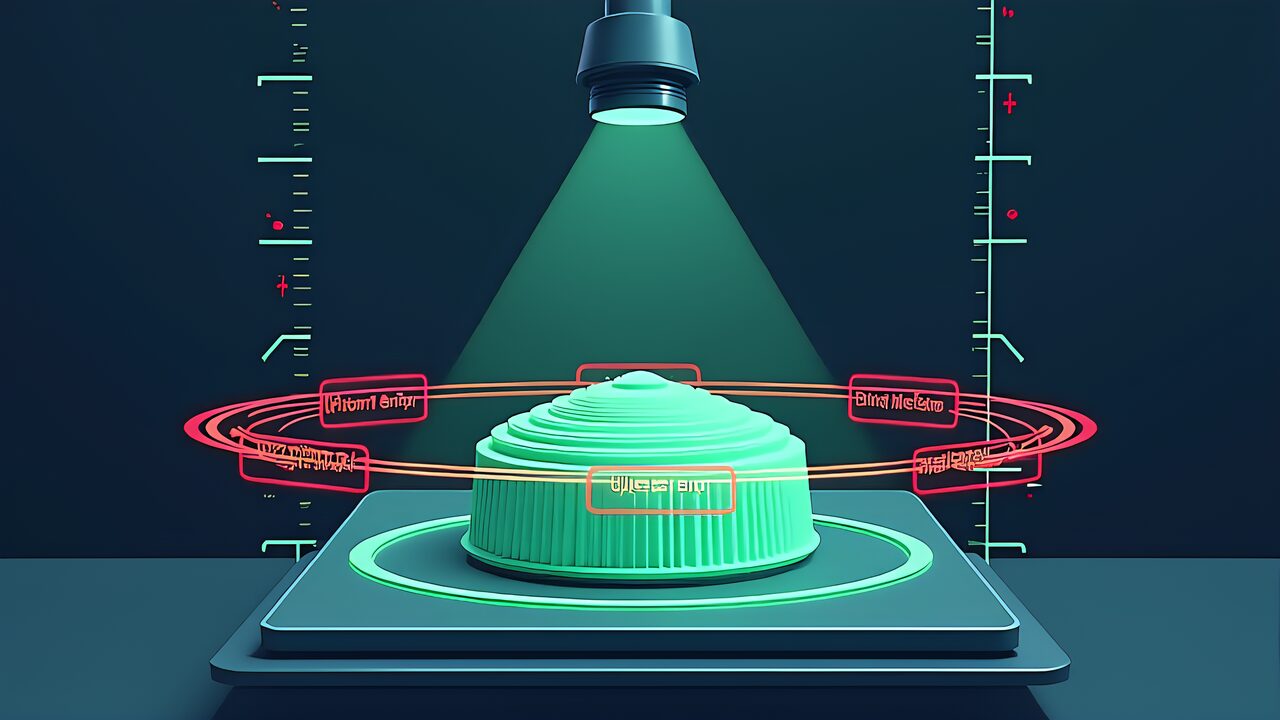

Defect Classification Accuracy: Detection Performance Across 7 Major Categories

Defining the 7 Categories

The major defect categories targeted by an AI visual inspection system for 3D printing are consolidated into the following seven, drawing from both academic literature and practical experience.

| # | Defect Category | Cause | Detection Difficulty |
|---|---|---|---|
| 1 | Layer Shift | Belt looseness, stepper skip | Medium (easy above 0.3mm) |
| 2 | Under-Extrusion | Filament feed shortage, heat creep | High (fine gaps) |
| 3 | Warping | Uneven cooling shrinkage | Medium (bottom warping hard to see top-down) |
| 4 | Stringing | Improper retraction settings | Low (thin threads, high contrast) |
| 5 | Spaghetti | Bed adhesion failure, collapse | Low (large-scale anomaly) |
| 6 | Over-Extrusion / Blobs | Excess flow, Z-seam | Medium (recurring patterns) |
| 7 | Delamination | Inter-layer adhesion failure | Highest (sub-surface defect) |

Note that Elephant’s Foot and Z-seam visibility are practical concerns but not yet well-represented in major ML datasets, so they are excluded from the primary 7-category classification.

Detection Strategy by Category

Addressing Texture Anomalies and Structural Defects

High-contrast defects (stringing, spaghetti): YOLO-based object detection excels here. Clear boundaries against the background yield mAP50 above 90%. Even lightweight YOLOv8n delivers sufficient accuracy for these defect types.

Texture anomalies (under-extrusion, over-extrusion): Segmentation approaches are more effective than object detection. These defects manifest as deviations from normal layer patterns and are better detected at the pixel level. The improved YOLOv8’s 97.5% mAP50 partially results from leveraging texture features.

Structural defects (delamination, warping): The most challenging category for single-image detection. Multi-angle imaging, structured lighting, or integration with post-print measurements (weight, dimensional comparison) are needed. ViT’s superiority here stems from its ability to capture global context via attention mechanisms.
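As an illustration of the post-print measurement idea, a weight check can run alongside the camera result. The sketch below assumes the expected weight comes from the slicer's filament estimate and uses an arbitrary 5% tolerance; both are assumptions to calibrate per material and printer, and the function names are hypothetical.

```python
def weight_deviation(measured_g: float, expected_g: float) -> float:
    """Relative deviation of the measured part weight from the expected weight."""
    if expected_g <= 0:
        raise ValueError("expected weight must be positive")
    return (measured_g - expected_g) / expected_g


def weight_flag(measured_g: float, expected_g: float, tolerance: float = 0.05) -> str:
    """Classify a part as UNDERWEIGHT, OVERWEIGHT, or OK against a relative tolerance."""
    deviation = weight_deviation(measured_g, expected_g)
    if deviation < -tolerance:
        return "UNDERWEIGHT"
    if deviation > tolerance:
        return "OVERWEIGHT"
    return "OK"


# Hypothetical part with a 20 g slicer estimate.
print(weight_flag(18.0, 20.0))  # prints UNDERWEIGHT
print(weight_flag(20.5, 20.0))  # prints OK
print(weight_flag(22.0, 20.0))  # prints OVERWEIGHT
```

An underweight part points toward missing material such as under-extrusion or internal voids. A weight check alone will not reveal poor inter-layer adhesion, so it supplements multi-angle imaging rather than replacing it.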

Current Status of Industry Standards

The current international standard for AM quality is ISO/ASTM 52902:2023 (Additive manufacturing — Test artifacts — Geometric capability assessment). However, this standard focuses on dimensional accuracy verification and does not define frameworks for ML-based visual inspection or severity classification.

This means the 7-category classification and severity criteria presented here are based on practical experience and academic research—not yet on international standards. This “standardization gap” is both a risk and an opportunity: early implementations define the de facto standards.

Print Farm Integration: OctoPrint × Inspection Agent Architecture

Leveraging the OctoPrint Event System

OctoPrint fires events at each stage of the printing process. The key to integrating an AI visual inspection system into a print farm is hooking into the PrintDone event.

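```python
import threading
import time

import octoprint.plugin
import requests


class PostPrintInspectionPlugin(
    octoprint.plugin.SettingsPlugin,  # required for self._settings
    octoprint.plugin.EventHandlerPlugin,
):
    """
    OctoPrint plugin that triggers AI visual inspection
    when printing completes.

    _send_mqtt_alert and _log_result are not defined in this listing.
    """

    def get_settings_defaults(self):
        # Placeholder defaults; adjust per printer.
        return {"cooling_delay": 300, "printer_id": "printer-1"}

    def on_event(self, event, payload):
        if event == "PrintDone":
            # Run in a background thread so the cooling delay does not
            # block OctoPrint's event handling.
            threading.Thread(
                target=self._trigger_inspection, args=(payload,), daemon=True
            ).start()

    def _trigger_inspection(self, payload):
        time.sleep(self._settings.get_int(["cooling_delay"]) or 0)
        try:
            response = requests.post(
                "http://localhost:5000/inspect",
                json={
                    "printer_id": self._settings.get(["printer_id"]),
                    "job_name": payload.get("name", "unknown"),
                    # PrintDone's "time" is the print duration in seconds,
                    # so it is sent separately from the wall-clock timestamp.
                    "print_time": payload.get("time", 0),
                    "timestamp": time.time(),
                },
                timeout=60,
            )
            response.raise_for_status()
            result = response.json()
        except (requests.RequestException, ValueError) as exc:
            self._logger.error("Inspection request failed: %s", exc)
            return
        self._process_result(result)

    def _process_result(self, result):
        decision = result["decision"]
        if decision == "FAIL":
            self._send_mqtt_alert(result)
            self._logger.warning(
                f"INSPECTION FAILED: {result['defects']}"
            )
        elif decision == "REVIEW":
            self._send_mqtt_alert(result, level="warning")
        else:
            self._log_result(result)
```

Coexistence with the Existing Ecosystem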

The core design principle is that it simply adds a “post-print” phase to the existing OctoPrint ecosystem, coexisting perfectly with in-process monitoring (Obico, etc.). During printing, Obico monitors for spaghetti; after successful completion, the inspection agent executes quality judgment. This creates a two-stage quality assurance system.

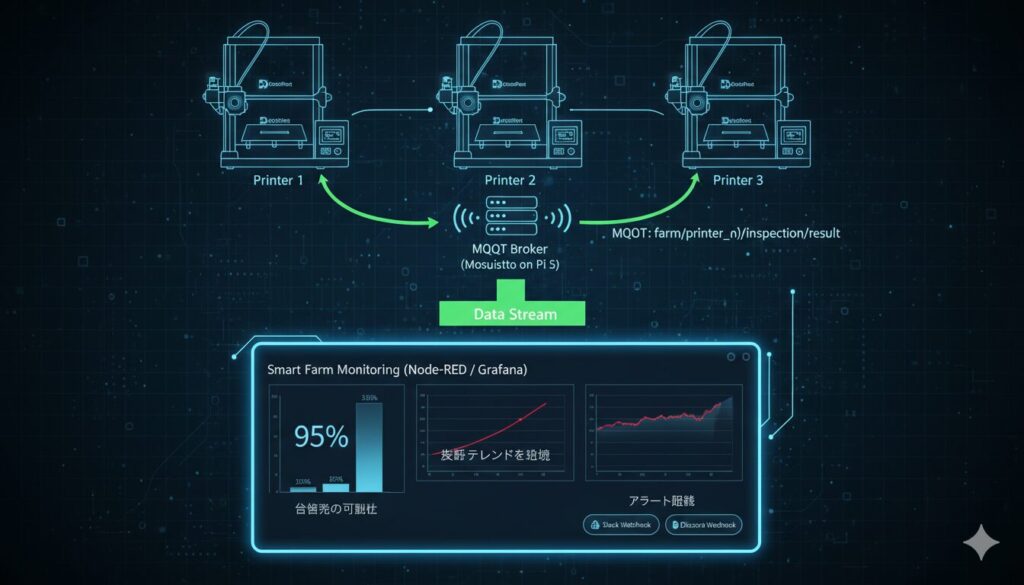

MQTT-Based Farm Integration Architecture

In print farm environments, inspection results from multiple printers need centralized management. MQTT Pub/Sub communication is ideally suited for this requirement.

Each Raspberry Pi per printer (or a shared Pi 5) executes inspections and publishes results to an MQTT broker. The dashboard visualizes per-printer pass rates, defect type frequency distributions, and time-series trends.
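What each station publishes can be as simple as one JSON message per inspection on a per-printer topic. The sketch below only builds the topic and payload; the `printfarm/<printer_id>/inspection` topic layout and the field names are assumptions, defects are assumed to arrive as plain dicts, and the actual publish call through an MQTT client library is left out.

```python
import json
import time


def build_message(printer_id: str, result: dict, now=None) -> tuple[str, str]:
    """Return (topic, JSON payload) for one inspection result."""
    topic = f"printfarm/{printer_id}/inspection"  # hypothetical topic layout
    payload = {
        "printer_id": printer_id,
        "decision": result["decision"],
        "severity": result["severity"],
        # Only category names are sent; bounding boxes stay on the station.
        "defects": [d["category"] for d in result["defects"]],
        "timestamp": time.time() if now is None else now,
    }
    return topic, json.dumps(payload, sort_keys=True)


sample_result = {
    "decision": "PASS",
    "severity": "INFO",
    "defects": [{"category": "stringing", "confidence": 0.91}],
}
topic, payload = build_message("printer-2", sample_result, now=0)
print(topic)  # prints printfarm/printer-2/inspection
print(payload)
```

With one topic per printer, a dashboard can subscribe to `printfarm/+/inspection` to receive results from the whole farm through MQTT's single-level wildcard.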

For instance, if “Printer 2 consistently flags stringing defects,” the dashboard enables immediate diagnosis: retraction settings, Bowden tube condition, or nozzle wear. AI inspection generates actionable data for continuous improvement—not just pass/fail decisions.
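The aggregation behind such a dashboard needs little more than counters. A minimal sketch, assuming each result is a dict with `printer_id`, `decision`, and a list of defect category names (a hypothetical message shape, not one defined by this article):

```python
from collections import Counter, defaultdict


def summarize(results):
    """Per-printer pass rate and most frequent defect categories."""
    totals = Counter()
    passes = Counter()
    defects = defaultdict(Counter)
    for r in results:
        pid = r["printer_id"]
        totals[pid] += 1
        if r["decision"] == "PASS":
            passes[pid] += 1
        defects[pid].update(r["defects"])
    return {
        pid: {
            "pass_rate": passes[pid] / totals[pid],
            "top_defects": defects[pid].most_common(3),
        }
        for pid in totals
    }


# Hypothetical results collected from two printers.
results = [
    {"printer_id": "printer-1", "decision": "PASS", "defects": []},
    {"printer_id": "printer-1", "decision": "PASS", "defects": []},
    {"printer_id": "printer-2", "decision": "PASS", "defects": ["stringing"]},
    {"printer_id": "printer-2", "decision": "REVIEW", "defects": ["stringing", "warping"]},
    {"printer_id": "printer-2", "decision": "REVIEW", "defects": ["stringing", "warping"]},
    {"printer_id": "printer-2", "decision": "FAIL", "defects": ["layer_shift"]},
]

summary = summarize(results)
print(summary["printer-2"]["pass_rate"])       # prints 0.25
print(summary["printer-2"]["top_defects"][0])  # prints ('stringing', 3)
```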

Positioning Within the Existing Ecosystem

| Plugin/Tool | Target Phase | Detection Target | Relationship |
|---|---|---|---|
| Obico | In-process | Spaghetti, collapse | Fully complementary |
| PrintWatch | In-process | Various defects | Complementary (bed check beta) |
| BedCheckAI | Pre-print | Bed clearance | Unrelated to inspection |
| PiNozCam | In-process | Local Pi inference | No post-print support |
| This System | Post-print | 7-category quality judgment | Fills the ecosystem gap |

Post-print quality inspection is a clearly missing function in the existing ecosystem. This system adds a “quality gate” at the final stage of the pipeline.

Conclusion: Automated Quality Control Transforms 3D Printing into Manufacturing

What is missing for 3D printing to graduate from “hobby” to “manufacturing”? It’s not printer speed, material strength, or software features. It’s a quality assurance system.

Three Technical Foundations

The approach to AI visual inspection for 3D printing described in this article rests on three facts:

Visual inspection is structurally inadequate. 70–85% reproducibility, missed micro-defects, inability to scale. This is a physical limitation of the human visual system, not an inspector competency issue.

Computer vision has reached practical maturity. YOLOv11m precision 91.28%, improved YOLOv8 mAP50 97.5%, ViT accuracy 95.98%. These figures compare favorably with the 70–85% reproducibility of human visual inspection, although the metrics are not directly comparable. Large-scale datasets like 3D-ADAM are eliminating model training bottlenecks.

Post-print inspection is a clear open gap. Obico, PrintWatch, Bambu Lab cameras, SimplyPrint—all specialize in in-process monitoring. None offer post-print quality judgment. This article presents a concrete architecture to fill that gap.

A Quality Revolution Starting at $150

All of this can be built with a $150 Raspberry Pi inspection station and an open-source stack. No expensive industrial equipment, no cloud subscriptions, no proprietary lock-in. Just a camera, a board computer, and a trained model.

The Standardization Gap and Future Outlook

ISO/ASTM 52902:2023 doesn’t yet cover ML-based visual inspection frameworks. The industry is in a phase where “whoever implements first, sets the standard.” Every print farm operator building this system today contributes to tomorrow’s quality standards.

The era of humans squinting at 3D printed parts under desk lamps is ending. Not because humans are incapable—but because machines are better at consistency. And in manufacturing, consistency is everything.