3D Generative AI Foundation Models 2026: How Shape-Understanding AI Is Changing 3D Printing

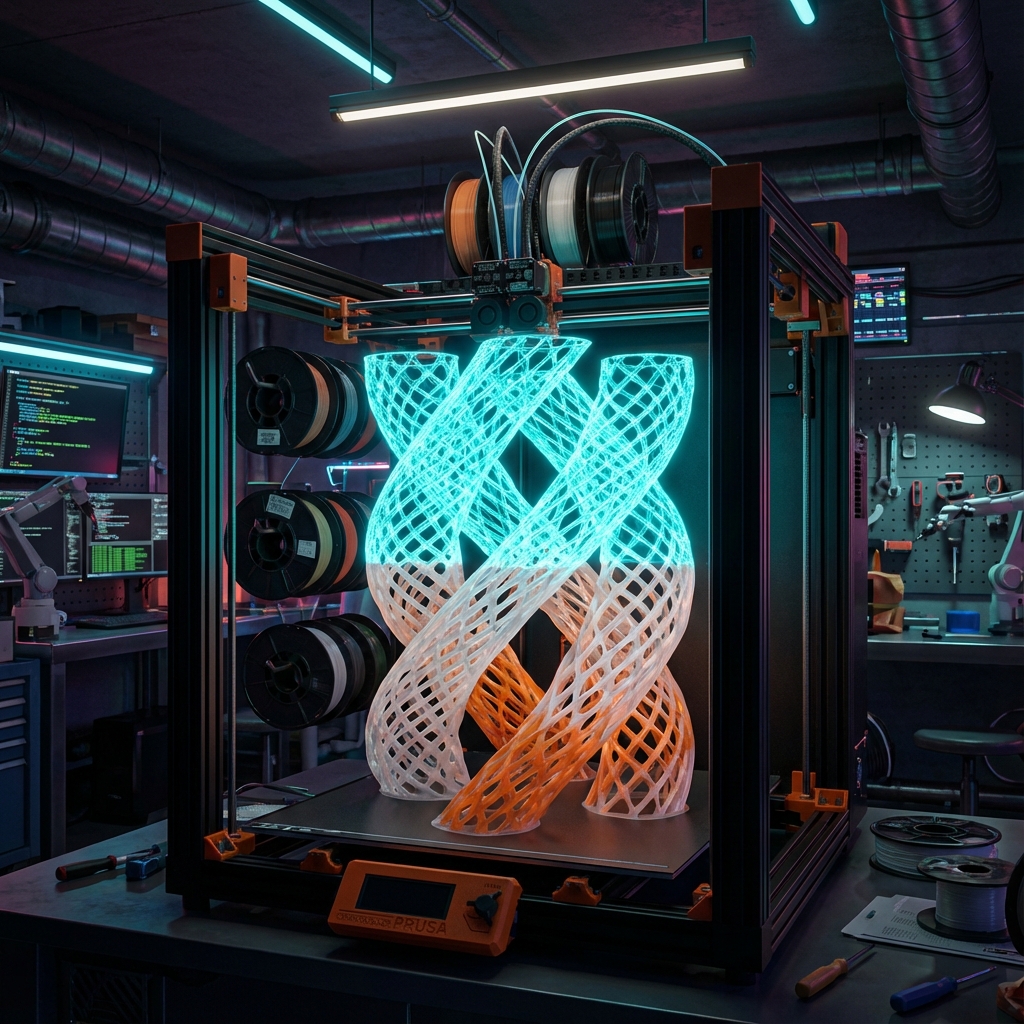

Type a prompt in Meshy and a 3D model appears in seconds. Upload an image to Tripo and a plausible solid comes back. In 2026, Text-to-3D is no longer a novelty. But if you’ve ever sent that output directly to a 3D printer, you know the reality: “It looks great. But it won’t print.”

In our previous series “AI x 3D Print Business Applications,” we outlined a business roadmap using AI tools. Starting this week, the “AI x 3D Print Cutting-Edge Technology” series turns to the technical depths. Part 1 dissects the current state and limitations of 3D generative AI foundation models.

- The “Quality Wall” of Text-to-3D — Why Generated Models Can’t Be Printed

- Three Design Philosophies of 3D Foundation Models

- Open-Source 3D Foundation Models — TripoSR, InstantMesh, LGM Technical Analysis

- Meshy, Tripo, Autodesk — 3D Generative AI Foundation Model Comparison

- “Printability” — The Ultimate Test for 3D Generative AI

- 3D Printer Material Compatibility — Shape Patterns AI Struggles With

- What Makers Should Do Now — GPU Investment and Learning Roadmap

- Series Preview: AI x 3D Print Cutting-Edge Technology Overview

The “Quality Wall” of Text-to-3D — Why Generated Models Can’t Be Printed

To understand where 3D generative AI foundation models stand in 2026, you first need to know why their output so often fails at the printing stage. The problems fall into three categories.

Wall 1: Non-Manifold Meshes. Meshes output by generative AI frequently contain holes, self-intersections, and zero-thickness faces. Slicers can’t correctly slice these, and print paths break down. Community testing shows that sending Meshy output directly to OrcaSlicer produces slice errors 3 out of 5 times.
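
Two of these defects, holes and edges shared by more than two faces, can be found by counting how often each edge is used. What follows is a minimal sketch in plain Python, assuming a triangle mesh given as vertex-index triples. The names `edge_report` and `edges_are_manifold` are illustrative and do not come from any slicer or library, and the check does not detect self-intersections or zero-thickness faces.

```python
from collections import Counter


def edge_report(faces):
    """Count how many triangles use each undirected edge.

    faces: iterable of (i, j, k) vertex-index triples.
    Returns (boundary_edges, non_manifold_edges) as sorted lists.
    """
    counts = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            counts[(min(u, v), max(u, v))] += 1
    # An edge used once borders a hole; an edge used 3+ times is non-manifold.
    boundary = sorted(e for e, n in counts.items() if n == 1)
    non_manifold = sorted(e for e, n in counts.items() if n > 2)
    return boundary, non_manifold


def edges_are_manifold(faces):
    """True when every edge is shared by exactly two triangles."""
    boundary, non_manifold = edge_report(faces)
    return not boundary and not non_manifold


# A closed tetrahedron: every edge is shared by exactly two triangles.
TETRA = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
# The same shape with one face removed, leaving a triangular hole.
OPEN_TETRA = TETRA[:3]
```

Passing this test is necessary but not sufficient for a clean slice, so it is best treated as a quick first filter before opening a repair tool.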

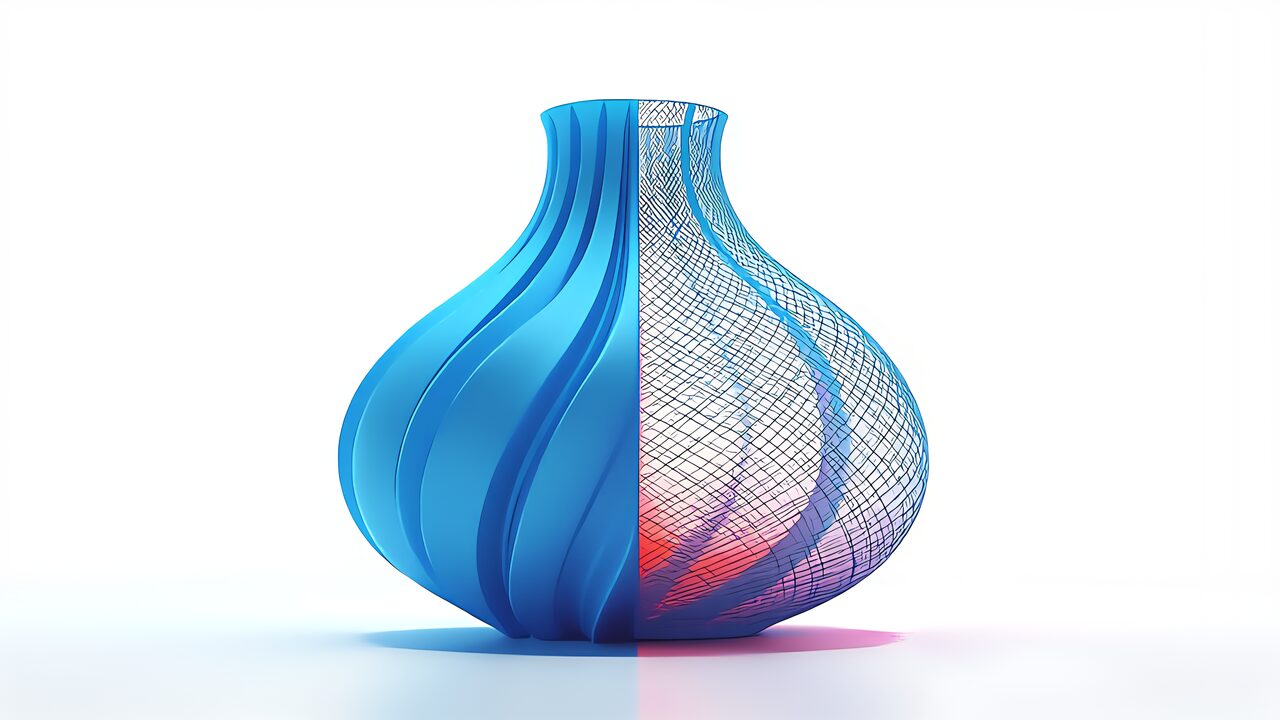

Wall 2: Insufficient Wall Thickness. The standard FDM nozzle diameter is 0.4mm, which puts the practical minimum wall thickness at 0.8mm (two perimeters). However, image-based 3D generation models optimize for “appearance,” casually generating walls under 0.1mm and floating decorations. These look beautiful on screen but cannot exist in the physical world.
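
The 0.8mm figure is simple arithmetic: nozzle diameter times the number of perimeters. A small sketch, assuming the extrusion line width equals the nozzle diameter (slicers often use a slightly wider line) and using hypothetical helper names:

```python
def min_wall_mm(nozzle_mm=0.4, perimeters=2):
    """Thinnest wall that still fits the given number of perimeters."""
    return round(nozzle_mm * perimeters, 3)


def too_thin(walls_mm, nozzle_mm=0.4, perimeters=2):
    """Return the measured wall thicknesses that fall below the minimum."""
    limit = min_wall_mm(nozzle_mm, perimeters)
    return [w for w in walls_mm if w < limit]
```

For example, `too_thin([0.1, 0.8, 1.2])` flags only the 0.1mm wall, the kind of value image-based generators tend to produce.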

Wall 3: Overhangs and Structural Strength. Overhangs exceeding 45 degrees require support material. Current 3D generative AI doesn’t consider print-time support structures at all. The result: surface roughness after support removal, or impossible-to-support geometries.
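
The 45-degree rule can be checked per face from its normal vector. The sketch below assumes Z is the build direction and measures the overhang angle from vertical, so a vertical wall is 0 degrees and a flat ceiling is 90 degrees. The function names and the threshold default are illustrative, not a slicer API.

```python
import math


def overhang_angle_deg(normal):
    """Overhang angle of a face, measured from vertical, in degrees.

    normal: outward face normal (nx, ny, nz); it need not be unit length.
    Faces that point sideways or upward return 0.0.
    """
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0:
        raise ValueError("zero-length normal")
    down = -nz / length  # how strongly the face points at the build plate
    if down <= 0:
        return 0.0
    return math.degrees(math.asin(min(1.0, down)))


def needs_support(normal, threshold_deg=45.0):
    """True when the face overhangs more steeply than the threshold."""
    return overhang_angle_deg(normal) > threshold_deg
```

Running this over every face of a generated mesh gives a rough count of how much of the surface would need support before the model ever reaches a slicer.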

The root cause of all three problems is that current 3D generative AI learns “visual appearance” rather than “geometric shapes.” Its training data consists of renderings and photographs: images, not engineering data.

Three Design Philosophies of 3D Foundation Models

Lineage 1: Image-Based Conversion (Image-Lifting)

This lineage converts 2D images to 3D by estimating depth maps and generating meshes. It is fast but inherently limited because 3D geometry is inferred from 2D information: back-facing surfaces, occluded regions, and internal structures remain guesswork.

Lineage 2: 3D-Native Diffusion Models

This lineage directly generates 3D representations through diffusion processes trained on 3D data. It has better geometric understanding than image-lifting, but it still optimizes for visual fidelity rather than manufacturing constraints.

Lineage 3: CAD-Native Foundation Models (Neural CAD)

Foundation models in this lineage are trained on CAD objects and industrial processes and reason directly about engineering geometry. Autodesk’s “Neural CAD for Geometry,” planned for Fusion integration, generates first-class CAD geometry from text, sketches, or images. Autodesk claims it “automates 80–90% of work designers normally do.” The decisive advantage is that output is in B-Rep (Boundary Representation) and NURBS: CAD-native formats that are parametric, editable, and naturally reflect manufacturing constraints.

However, Neural CAD is not yet commercially released as of March 2026. Its true value remains unknown until Autodesk Fusion and Forma integration is complete.

Open-Source 3D Foundation Models — TripoSR, InstantMesh, LGM Technical Analysis

Commercial tools aren’t the only 3D generative AI foundation models. Open-source models released since early 2024 have made it realistic for makers to run 3D generation on home GPUs.

TripoSR — High-Speed 3D Reconstruction from Single Images

Co-developed by Stability AI and Tripo AI Research Lab, TripoSR generates a 3D mesh from a single image in under 0.5 seconds (a figure the authors report on an NVIDIA A100). The core architecture is a pipeline that feeds the input through an image encoder (a DINOv1-based ViT) and decodes the result into a triplane NeRF (Neural Radiance Field). A triplane decomposes 3D space into three 2D feature maps (the XY, XZ, and YZ planes), dramatically reducing memory consumption compared to full 3D voxel representations.
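
The memory argument is easy to verify with arithmetic: three planes store 3·N²·C feature values, while a dense voxel grid stores N³·C. The sketch below uses a resolution of 256 and 32 channels purely as assumed illustration values; they are not TripoSR's published configuration, and the function names are hypothetical.

```python
def triplane_values(resolution, channels):
    """Feature values stored by three 2D planes (XY, XZ, YZ)."""
    return 3 * resolution * resolution * channels


def voxel_values(resolution, channels):
    """Feature values stored by a dense 3D grid of the same resolution."""
    return resolution ** 3 * channels


def plane_coordinates(x, y, z):
    """Project a 3D point onto the three planes where features are sampled."""
    return {"XY": (x, y), "XZ": (x, z), "YZ": (y, z)}


RES, CH = 256, 32  # assumed values, for illustration only
savings = voxel_values(RES, CH) / triplane_values(RES, CH)  # equals RES / 3
```

Under these assumptions the dense grid needs roughly 85 times as many values as the triplane, and the gap grows linearly with resolution.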

One practical point stands out: it runs on GPUs with just 6GB VRAM (RTX 3060 class). Output is meshified via marching cubes and can be exported directly as STL/OBJ. However, output resolution is equivalent to 256³ voxels, so fine details get crushed: facial expressions on figurines and grooves under 0.5mm cannot be reproduced.
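
To see why a 256³ grid loses sub-millimeter detail, divide the model's longest side by the grid resolution. This is a rough sketch, assuming the grid spans the model's bounding cube and that a feature needs about two cells to survive meshing; both are simplifying assumptions rather than properties documented for TripoSR.

```python
def voxel_size_mm(longest_side_mm, grid=256):
    """Edge length of one grid cell when the grid spans the model."""
    return longest_side_mm / grid


def smallest_feature_mm(longest_side_mm, grid=256, cells_per_feature=2):
    """Approximate smallest feature that survives at this resolution."""
    return voxel_size_mm(longest_side_mm, grid) * cells_per_feature
```

For a 128mm figurine this gives 0.5mm per cell and roughly 1mm for the smallest reliable feature, which is consistent with grooves under 0.5mm disappearing.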

InstantMesh — Multi-View Diffusion + LRM Integration

Developed by Tencent ARC Lab and ShanghaiTech University, InstantMesh combines multi-view image generation via Zero123++ with a Large Reconstruction Model (LRM). It first generates 6 viewpoint images from a single input, then the LRM reconstructs the 3D mesh. The two-stage approach achieves higher geometric accuracy than single-stage methods.

LGM (Large Gaussian Model) — Gaussian Splatting for 3D

LGM applies 3D Gaussian Splatting to 3D generation from single images. It is particularly fast at inference, generating a 3D representation in approximately 5 seconds. It produces high-quality visual results, but converting the Gaussian representation into a printable mesh requires additional post-processing.

Open-Source Model Practicality Summary

| Model | Speed | Quality | VRAM Req. | Print Readiness |
|---|---|---|---|---|
| TripoSR | Ultra-fast (0.5s) | Medium | 6GB | Low (requires mesh repair) |
| InstantMesh | Fast (10-30s) | High | 12GB+ | Medium (better geometry) |
| LGM | Fast (5s) | High (visual) | 8GB+ | Low (Gaussian→mesh conversion needed) |

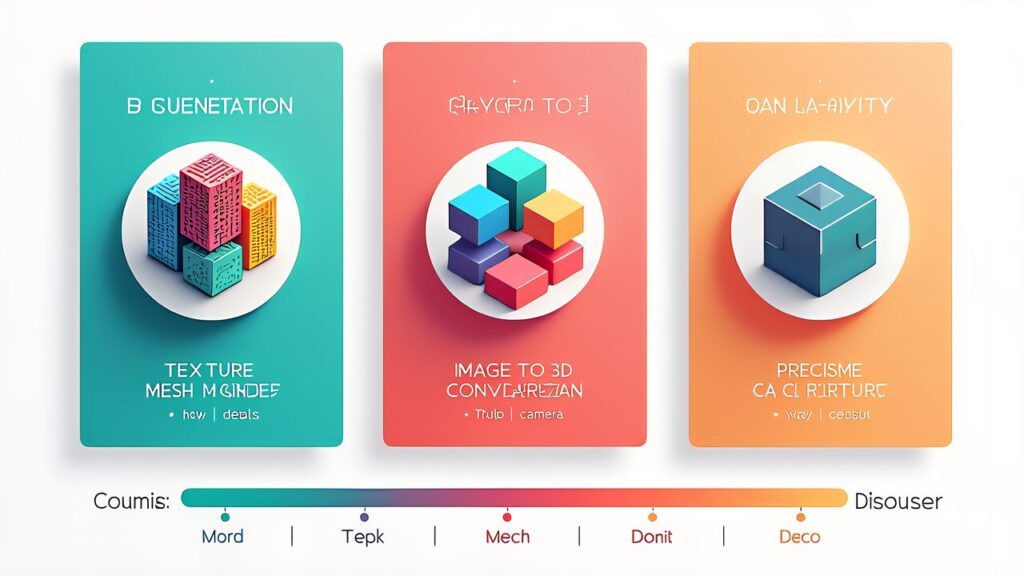

Meshy, Tripo, Autodesk — 3D Generative AI Foundation Model Comparison

Meshy 6 — Commercial Optimization of Transformer Diffusion Models

Meshy 6 introduced a 3D print conversion feature at CES 2026 that internally runs a mesh repair engine (non-manifold removal, automatic wall thickening, bottom flattening). Output formats include STL/OBJ/GLB. However, complex organic shapes (branching trees, lattice structures) still sometimes have incomplete repairs. Output meshes typically have 50K–200K vertices, within acceptable slicer processing range.

Tripo — Deep Pipeline with Multi-Stage Refinement

Tripo specializes in image-to-3D with a pipeline covering segmentation, texturing, retopology, and rigging. Its multi-stage refinement iteratively refines coarse 3D meshes using Score Distillation Sampling (SDS) with 2D diffusion models. Tripo’s retopology engine auto-reduces high-polygon meshes (100K+ vertices) to 10K–30K while preserving shape. The Basic plan starts at $12/month (approximately ¥1,908).

Print readiness assessment: Useful as a starting point for concept modeling, but SDS optimization prioritizes “appearance,” causing uneven wall thickness. Thin walls under 0.3mm frequently appear at concavities and junctions. Post-processing in Blender is mandatory for FDM printing.

Autodesk Neural CAD — A Dimension-Breaking B-Rep Approach

Neural CAD is a foundation model trained on professional design data that directly generates CAD-native geometry from text, sketches, or images. Unlike mesh-based generators, it outputs B-Rep (Boundary Representation) with NURBS surfaces: parametric, editable, and manufacturing-aware. This is a fundamentally different approach, but as of March 2026 it is still pre-release.

“Printability” — The Ultimate Test for 3D Generative AI

Key Point: The gap between “visually beautiful 3D model” and “physically printable object” remains the biggest challenge. Current AI optimizes for renders, not for manufacturing. Until models are trained on engineering data with physical constraints, human post-processing remains essential.

3D Printer Material Compatibility — Shape Patterns AI Struggles With

Tall Thin Spires

3D generative AI frequently creates Gothic or fantasy models with spires tapering to under 1mm. In FDM, an extremely small per-layer print area means the previous layer has not cooled before the next is deposited, causing melting and deformation. For PLA, this becomes pronounced below about 2mm tip diameter; with ABS/ASA it already shows up at around 3mm.

Mitigation: Set the slicer’s “minimum layer time” to 8+ seconds. In OrcaSlicer, control this via “Slow down if layer print time is below.” The more fundamental fix is to edit the generated mesh in Blender so that tips are at least 2mm in diameter.
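
A back-of-the-envelope estimate shows why slowing down alone rarely rescues a thin tip. The sketch below approximates a solid circular layer as area divided by line width to get the extrusion path length; the 0.4mm line width, the 50mm/s speed in the example, and the function names are assumptions for illustration, and real slicer paths include perimeters and travel moves that this ignores.

```python
import math


def layer_path_mm(diameter_mm, line_width_mm=0.4):
    """Approximate extrusion path length for one solid circular layer."""
    area = math.pi * (diameter_mm / 2) ** 2
    return area / line_width_mm


def layer_time_s(diameter_mm, speed_mm_s, line_width_mm=0.4):
    """Approximate time to print one layer at a constant speed."""
    return layer_path_mm(diameter_mm, line_width_mm) / speed_mm_s


def speed_for_min_layer_time(diameter_mm, min_time_s=8.0, line_width_mm=0.4):
    """Speed the printer would need to drop to in order to hit the target."""
    return layer_path_mm(diameter_mm, line_width_mm) / min_time_s
```

Under these assumptions a 2mm tip takes well under a second per layer at 50mm/s, and reaching 8 seconds would require dropping below 1mm/s. Slicers typically also enforce a minimum print speed, which is why thickening the tip (or printing several copies at once) tends to be the more reliable route.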

Large Flat Surfaces (The Warping Trap)

Generative AI also tends to create flat surfaces exceeding 50mm×50mm for architectural and tabletop terrain models. In FDM, large flat surfaces cool unevenly, causing edge warping. PLA warps 0.5–1mm at 100mm×100mm; ABS warps noticeably at 50mm×50mm.

Mitigation: Heated build plate (PLA: 60°C, ABS: 100–110°C) plus 5mm brims. Add 0.5mm ribs to the underside of large flat surfaces.

Excessive Bridging

Generative AI favoring organic shapes frequently uses thin-surface “bridges” connecting pillars. FDM bridging performance depends heavily on material and distance. PLA manages ~30mm bridges at 100% fan. TPU sags beyond 10mm. PETG produces stringing.

Thin Wall Intersections

Where multiple thin walls meet at angles, FDM can’t properly deposit filament, creating weak joints. Generated models frequently feature decorative elements with 0.4mm walls intersecting at complex angles.

What Makers Should Do Now — GPU Investment and Learning Roadmap

Hardware: GPU Becomes the Constraint

Running local 3D generation models requires NVIDIA GPUs. TripoSR works with 6GB VRAM (RTX 3060), but higher-quality models like InstantMesh need 12GB+ (RTX 4070 Ti or better). For serious local generation, RTX 4090 (24GB) is the sweet spot.
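
As a quick planning aid, the VRAM figures from the open-source summary table can be turned into a lookup. The numbers below are copied from this article's table, not from vendor specifications, and `models_that_fit` is a hypothetical helper name.

```python
# Minimum VRAM in GB, taken from the summary table in this article.
MIN_VRAM_GB = {"TripoSR": 6, "InstantMesh": 12, "LGM": 8}


def models_that_fit(vram_gb):
    """Return the models whose stated minimum VRAM fits the given card."""
    return sorted(name for name, need in MIN_VRAM_GB.items() if need <= vram_gb)
```

For example, `models_that_fit(8)` returns `["LGM", "TripoSR"]`, while a 24GB card covers all three.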

Printer: Models Capable of Complex Geometry

Multi-material printers like Bambu Lab A1 mini or P1S handle complex support structures better. Resin printers (ELEGOO Saturn series) excel at fine details that FDM struggles with.

Skills: 3D Repair Mastery Is Top Priority

Learning Blender mesh repair (non-manifold detection, wall thickening, remeshing) is the most immediately valuable skill. Tools like Meshmixer and the 3D Print Toolbox in Blender are essential for bridging the gap between AI generation and physical printing.

Series Preview: AI x 3D Print Cutting-Edge Technology Overview

This series will cover: real-time print correction (Part 2), generative design with FEA (Part 3), AI slicers (Part 4), multi-material optimization (Part 5), digital twins (Part 6), and a technology roundup (Part 7).

For more information, visit arXiv: 3D Generative AI.