Claude Opus 4.7 Deep Dive 2026 — SWE-bench 64.3% and Migration Guide from Opus 4.6

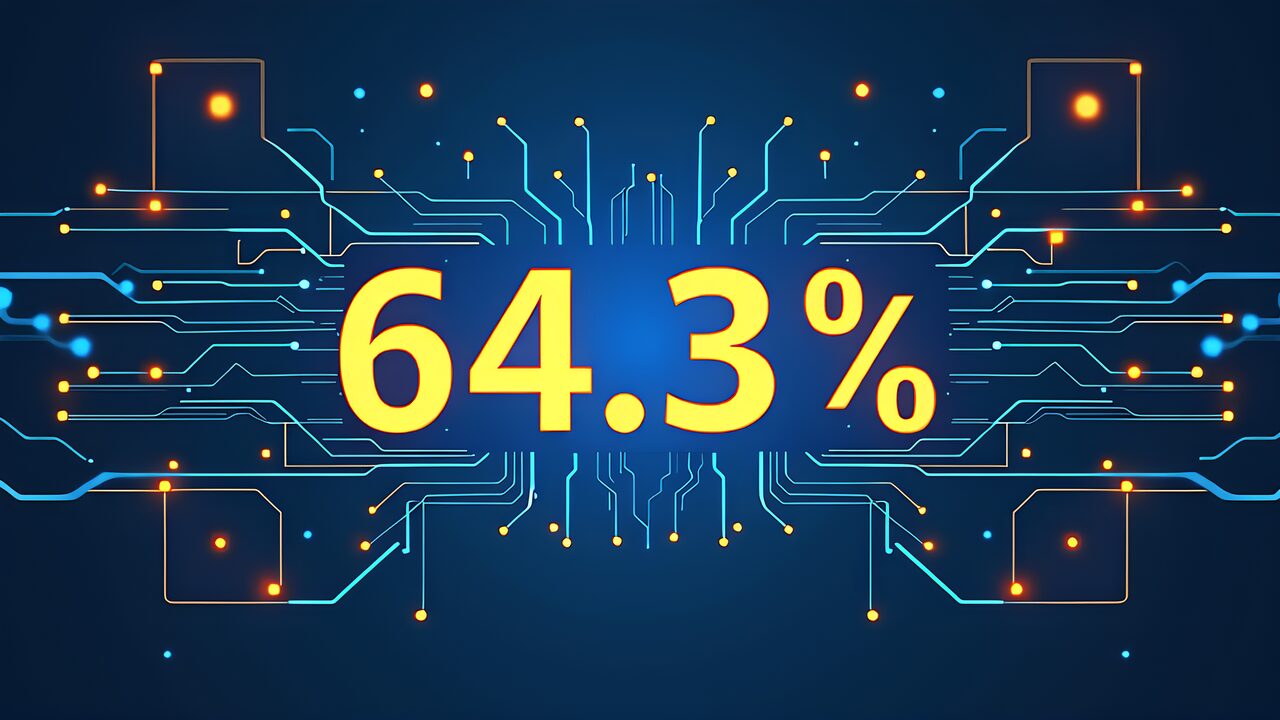

Claude Opus 4.7 was officially released on April 16, 2026, raising the industry standard for coding assistance and agent implementation by a full tier. The figures of 64.3% on SWE-bench Pro, 98.5% visual accuracy, and 70% on CursorBench are more than benchmark victories: they signify the “elimination of wait times” and a “dramatic reduction in retries” in real-world workflows. Drawing on official data and primary sources published by Anthropic, this article analyzes exactly what Claude Opus 4.7 changes and the key decision criteria that developers and 3D printing businesses should consider when evaluating migration from Opus 4.6.

- The New Standard Set by Claude Opus 4.7 2026

- Paradigm Shift — From “Intelligence” to “Reliability”

- Solution — Pricing and Availability

- Tutorial: Migration Decision Flow from Opus 4.6

- Ecosystem — Real-World Use Cases

- Implementation Deep Dive — Optimizing Prompt Caching and Tool Calls

- Model Combination Strategies

- Security and Compliance

- Roadmap Predictions Beyond Summer 2026

- Conclusion — Why You Should Migrate to Opus 4.7 Now

The New Standard Set by Claude Opus 4.7 2026

On April 16, 2026, Anthropic simultaneously deployed Claude Opus 4.7 across all channels (Claude products, API, Amazon Bedrock, Google Cloud Vertex AI, Microsoft Foundry). What distinguishes Claude Opus 4.7 2026 is not incremental improvements over Opus 4.6, but rather a fundamental elevation of baseline capabilities.

The key metrics officially published can be summarized as follows.

| Metric | Opus 4.6 | Opus 4.7 | Difference |
|---|---|---|---|
| SWE-bench Pro | 53.4% | 64.3% | +10.9pt |
| CursorBench | 58% | 70% | +12pt |
| Visual Accuracy (Internal Eval) | 54.5% | 98.5% | +44.0pt |
| Supported Image Resolution | 1.25MP | 3.75MP | 3x |
| Coding Quality | Baseline | +13% | — |
| Production Task Solving | Baseline | 3x | — |
| Multi-step Workflow | Baseline | +14% | — |
| Tool Call Errors | Baseline | ~1/3 | Reduced |

For reference, the SWE-bench Pro scores of competing models available at the same time were 57.7% for GPT-5.4 and 54.2% for Gemini 3.1 Pro, confirming that Claude Opus 4.7 2026 maintains the top position in this category. While benchmarks don’t fully reflect real-world capability, the fact that the “percentage of hard problems solved” shifted by 10+ points suggests that some tasks previously impossible even for Opus have now entered the “Opus 4.7 can handle it” territory.

What’s particularly noteworthy is the magnitude of visual accuracy improvement. The 98.5% figure means it has reached a level where tasks like extracting UI element coordinates from screenshots, converting handwritten notes to text, and interpreting architecture diagrams in technical documents can be completed “without needing to retake the image.” For 3D printing businesses, this brings significant benefits in areas such as reading design drawings, defect detection from print photos, and slicer UI screenshot analysis.

Paradigm Shift — From “Intelligence” to “Reliability”

What deserves attention in Opus 4.7 is not just the scores but the improvement in “reliability.” The roughly one-third reduction in tool call errors is something any agent developer can immediately appreciate. In late 2025 agent implementations, the problem of entire workflows halting when even one step’s arguments broke during chained tool calls had been a persistent headache for developers.

The 14% multi-step workflow improvement means the completion rate for chained tasks with 3+ steps (search → extract → summarize → write, etc.) noticeably increases. Anthropic uses the phrase “3x production task solving ability,” which refers to completion rates in internal evaluations — meaning that with the same prompts and tool sets, 3x more tasks complete without human intervention compared to Opus 4.6.

This paradigm shift changes the economics of agent businesses. If the human supervision cost per automation task drops from 10 minutes to under 3 minutes, the same team can handle 3x or more cases. The value Claude Opus 4.7 2026 delivers isn’t about model performance improvement — it’s about reducing human supervision costs.

Solution — Pricing and Availability

The pricing structure has been kept identical to Opus 4.6.

- API pricing: Input $5/Mtok, Output $25/Mtok (same as Opus 4.6)

- Prompt caching: Up to 90% cost reduction for repeated contexts

- Availability: Claude.ai (Pro/Team/Enterprise), API, Bedrock, Vertex AI, Microsoft Foundry

- Model ID: claude-opus-4-7-20260416

Keeping prices unchanged while substantially improving performance means that existing Opus 4.6 users can switch model IDs without any budget revision. For new adopters, the argument that “Opus is expensive” is weakened by the fact that per-task costs are actually decreasing: if a task that previously took three attempts now completes on the first, the effective token cost drops to one-third.
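To make the per-task argument concrete, here is a minimal sketch that computes the token cost of one completed task from the list prices above. The token counts and attempt counts are illustrative assumptions, not measurements, and the function name is hypothetical.

```python
# List prices quoted in this article (USD per million tokens).
INPUT_USD_PER_MTOK = 5.0
OUTPUT_USD_PER_MTOK = 25.0


def cost_per_task(input_tokens: int, output_tokens: int, attempts: int) -> float:
    """Token cost of one completed task when every attempt resends the full prompt."""
    per_attempt = (
        input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    )
    return per_attempt * attempts


# Assumed workload: 20k input tokens and 2k output tokens per attempt.
three_attempts = cost_per_task(20_000, 2_000, attempts=3)
one_attempt = cost_per_task(20_000, 2_000, attempts=1)
print(f"3 attempts: ${three_attempts:.2f}, 1 attempt: ${one_attempt:.2f}")
```

Because the per-token price is the same for both models, the ratio between the two results depends only on the number of attempts.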

Tutorial — Migration Decision Flow from Opus 4.6

The decision of whether to migrate from Opus 4.6 to Claude Opus 4.7 2026 should be based on workload characteristics. Here’s a practical decision framework.

Immediate Migration Recommended

The following cases warrant immediate migration, ideally by April 2026.

- Long-running coding agents: SWE-bench tasks, repository-wide fixes, test-driven debugging, etc. The +10.9pt improvement transforms "passes on the 2nd or 3rd try" into "passes on the first try."

- Workflows involving visual recognition: Screenshot analysis, drawing interpretation, OCR replacement, UI automation, etc. 98.5% accuracy and 3.75MP support represent a qualitative leap.

- Multi-step tool calls: Chains of search → fetch → analyze → write, or multi-server orchestration via MCP. The 1/3 reduction in tool errors directly cuts debugging time.

- Production agents: Customer support, internal help desks, automated email processing, etc. 3x production task solving directly lowers operational costs.

Gradual Migration is Sufficient

The following workloads are fine with Haiku 4.6 or Sonnet 4.6 variants, so there’s no rush.

- Simple text classification (positive/negative)

- Short responses under 100 tokens

- Simple classification tasks submitted in large batches

- Edge case detection as a first-pass filter

For these use cases, Haiku 4.6 ($0.25/$1.25 per Mtok) offers a vastly better cost-performance ratio, and there’s no benefit to running them on Opus. However, a complementary strategy of using Haiku for front-line processing and escalating only “difficult” cases to Opus 4.7 has high ROI.

Migration Checklist

When migrating, we recommend checking the following in order.

- Model ID change: Replace claude-opus-4-6-20250514 with claude-opus-4-7-20260416

- Prompt review: Opus 4.7 interprets instructions more accurately, so overly detailed “workaround” prompts may become unnecessary. Simplify where possible

- Tool definition update: Review type annotations, description fields, and error handling instructions. Opus 4.7 reads tool definitions more precisely, so well-written definitions yield larger returns

- Test case execution: Run existing test suites and compare pass rates. If Opus 4.7 scores lower on any specific test, investigate the prompt rather than the model

- Phased rollout: Start by routing 10% of traffic to Opus 4.7 in production, monitor for 1 week, then proceed to full deployment

- Evaluation metrics: In addition to completion rate and latency, add “human intervention frequency” as a metric. This is where Opus 4.7’s value most clearly appears

- Fallback strategy: Configure automatic fallback to Opus 4.6 via API error handling during the transition period

- Logging enhancement: Record each tool call success/failure to quantify Opus 4.7's error reduction

- Team communication: Share migration schedule and expected behavioral changes to prevent confusion from unannounced model changes

- Budget monitoring: Verify initial budget with finance department. Per-token pricing is unchanged, but changes in model behavior may affect actual costs

- Cost monitoring: Per-token pricing is unchanged, but output length may vary, so monitor during the first week
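The phased rollout and fallback items in the checklist can be sketched as follows. This is a minimal illustration, not a production router: `call_model` is a hypothetical wrapper around whatever API client you use, and the hash-based bucketing is one assumed way of sending a stable 10% of requests to the new model.

```python
import hashlib
from typing import Callable

OLD_MODEL = "claude-opus-4-6-20250514"  # model IDs as given in this article
NEW_MODEL = "claude-opus-4-7-20260416"


def pick_model(request_id: str, rollout_percent: int = 10) -> str:
    """Deterministically route a fixed share of requests to the new model."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return NEW_MODEL if bucket < rollout_percent else OLD_MODEL


def call_with_fallback(
    call_model: Callable[[str, str], str], request_id: str, prompt: str
) -> tuple[str, str]:
    """Try the selected model; if the new model raises, retry once on the old one.

    `call_model(model_id, prompt)` is a hypothetical wrapper around your API client.
    Returns (model_used, response_text) so the outcome can be logged.
    """
    model = pick_model(request_id)
    try:
        return model, call_model(model, prompt)
    except Exception:
        if model == OLD_MODEL:
            raise  # nothing left to fall back to
        return OLD_MODEL, call_model(OLD_MODEL, prompt)
```

Hashing the request ID keeps each request on the same model across retries, which makes the one-week comparison of completion rates and intervention frequency easier to interpret. Raising `rollout_percent` to 100 completes the migration.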

Ecosystem — Real-World Use Cases

How does the capability improvement of Claude Opus 4.7 2026 change actual workflows? Here we dive deep into 3 scenarios from the perspective of 3D printing businesses and technical bloggers.

Scenario 1: Automated Code Review

The task of taking pull request diffs as input and flagging security, performance, and readability issues sees a dramatic increase in completion rate with Opus 4.7. In particular, the +14% multi-step improvement directly benefits the area of “tracking cross-file dependencies while making suggestions.” The basics of calling the Claude API are covered in our Claude API Introduction.

The implementation tip is to give Opus 4.7 an explicit 3-stage prompt: “First understand the entire file structure, then evaluate the diff, and finally provide feedback in priority order.” The multi-step processing improvement is maximized with this kind of “stage separation” design.
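One way to express this stage separation is to assemble the prompt programmatically. The sketch below is an assumption about how such a prompt might be laid out; the function name and the `<file_tree>` and `<diff>` delimiters are illustrative choices, not a prescribed format.

```python
REVIEW_STAGES = [
    "First, understand the entire file structure.",
    "Then, evaluate the diff against that structure.",
    "Finally, provide feedback in priority order.",
]


def build_review_prompt(file_tree: str, diff: str) -> str:
    """Assemble a staged code-review prompt: context first, numbered stages last."""
    stages = "\n".join(f"{i}. {text}" for i, text in enumerate(REVIEW_STAGES, start=1))
    return (
        "You are reviewing a pull request for security, performance, "
        "and readability issues.\n\n"
        f"<file_tree>\n{file_tree}\n</file_tree>\n\n"
        f"<diff>\n{diff}\n</diff>\n\n"
        f"Work through these stages in order:\n{stages}"
    )


print(build_review_prompt("src/app.py\nsrc/db.py", "+ query = f'SELECT {user_input}'"))
```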

Scenario 2: Document Analysis Agent

An agent that takes technical documents containing PDFs and images as input and answers specific questions is the use case that benefits most from 98.5% visual accuracy and 3.75MP support. For example, feeding a 3D printer service manual (hundreds of pages with many diagrams) and extracting the “recommended extruder temperature range” is clearly more accurate than conventional OCR+LLM setups.

As an implementation example, we recommend uploading PDFs through the Anthropic API’s Files API and querying claude-opus-4-7. When referencing multiple documents, combining this with prompt caching significantly speeds up responses from the second query onward.

Scenario 3: Agentifying 3D Print Workflows

For 3D printing businesses, Claude Opus 4.7 2026 delivers powerful automation in the following areas.

- Automated Quoting: Analyze STL/STEP files to automatically calculate print time, material cost, and post-processing fees. Opus 4.7’s visual recognition can directly read design drawings

- Quality Control Automation: Compare photos of printed parts against design data to auto-detect defects. The 98.5% visual accuracy makes this practical

- Slicer Setting Recommendations: Recommend optimal parameters based on material, geometry, and intended use

- Customer Inquiry Handling: First-response handling for specification questions, delivery consultations, and additional orders

Each of these is possible with Sonnet 4.6 alone, but when implementing agents that chain multiple tasks, Opus 4.7’s “reliability” becomes a prerequisite for production deployment. For those preparing for certification, also refer to our CCA Exam Preparation Guide.

Estimated Adoption Impact

Here’s a projection for a mid-sized business handling 1,000 business inquiries per month switching from Opus 4.6 to Opus 4.7.

| Item | Opus 4.6 | Opus 4.7 |
|---|---|---|
| Auto-completion Rate | ~60% | ~85% |
| Human Interventions/Month | 400 cases | 150 cases |
| Human Intervention Time/Case | 5 min | 3 min |
| Total Human Hours/Month | 33 hours | 7.5 hours |

The 25.5-hour/month difference translates to approximately ¥76,500/month in cost savings at an hourly rate of ¥3,000. Since API costs remain unchanged, this represents an annual profitability improvement of over ¥900,000.
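The projection can be reproduced with a few lines of arithmetic. The sketch below uses only the inputs from the table and the ¥3,000 hourly rate; the function name is hypothetical. Without rounding the Opus 4.6 total down to 33 hours, the saving comes to about 25.8 hours, or roughly ¥77,500 per month, slightly above the rounded figures in the text.

```python
def monthly_human_hours(inquiries: int, auto_rate: float, minutes_per_case: float) -> float:
    """Hours of human handling per month for inquiries that are not auto-completed."""
    interventions = inquiries * (1 - auto_rate)
    return interventions * minutes_per_case / 60


before = monthly_human_hours(1_000, auto_rate=0.60, minutes_per_case=5)
after = monthly_human_hours(1_000, auto_rate=0.85, minutes_per_case=3)
saved_hours = before - after
monthly_saving_jpy = saved_hours * 3_000  # hourly rate from the text
print(f"{before:.1f} h -> {after:.1f} h, saving {saved_hours:.1f} h/month")
print(f"about JPY {monthly_saving_jpy:,.0f}/month, JPY {monthly_saving_jpy * 12:,.0f}/year")
```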

Implementation Deep Dive — Optimizing Prompt Caching and Tool Calls

When leveraging Claude Opus 4.7 2026 in production, simply swapping the model ID won’t maximize the performance gains. Here we outline the optimization points to address at the implementation level.

Prompt Caching Strategy

Opus 4.7’s input token pricing of $5/Mtok is in the same range as other flagship models. However, by utilizing prompt caching, you can reduce the effective cost of repeatedly referenced system prompts and document contexts by up to 90%. For agents with long system instructions, RAG pipelines referencing large knowledge bases, and chat applications sending multiple queries within the same context, caching design determines the break-even point.

The key implementation insight is the separation of “what to cache vs. what not to cache.” System prompts, tool definitions, and large documents are cache targets. User inputs, time-dependent information, and session-specific variables should be separated as non-cache targets. Since the Anthropic API allows explicit specification of cache blocks, performing this separation at the design stage is the key to cost optimization.
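As a rough sketch of this separation, the function below builds request arguments in which the stable prefix (system prompt and reference document) carries a cache breakpoint and the per-request user input stays outside it. It assumes the `cache_control` content-block format of the Messages API; the function name, `max_tokens` value, and split into two system blocks are illustrative, so check the current API reference before relying on them.

```python
def build_cached_request(system_prompt: str, reference_doc: str, user_input: str) -> dict:
    """Build Messages API keyword arguments with a cache breakpoint after the stable prefix."""
    return {
        "model": "claude-opus-4-7-20260416",  # model ID as given in this article
        "max_tokens": 1024,
        "system": [
            {"type": "text", "text": system_prompt},
            {
                "type": "text",
                "text": reference_doc,
                # Content up to and including this block is eligible for caching.
                "cache_control": {"type": "ephemeral"},
            },
        ],
        # Session-specific content stays outside the cached prefix.
        "messages": [{"role": "user", "content": user_input}],
    }


request = build_cached_request(
    "You answer questions about printer manuals.",
    "(long manual text)",
    "What is the recommended extruder temperature range?",
)
print(list(request))
```

With the official Python SDK, a dictionary like this could be passed as `client.messages.create(**request)`. Anything that changes per request, such as timestamps, should be kept out of the cached blocks, since a changed prefix cannot be reused.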

Tool Call Argument Design

Opus 4.7’s one-third reduction in tool call errors delivers immediately noticeable improvement in existing agent implementations. However, to maximize this benefit, there’s room for improvement on the tool definition side as well.

Recommended best practices for tool definitions are as follows.

- Use descriptive argument names: x→target_file_path, opts→retry_options

- Use strict type annotations: Constrain values with enums, define formats with regex

- Include concrete examples in description fields: e.g., “Example: {"path": "/usr/local/bin"}”

- Specify expected behavior on error: “On failure, return error message and retry”

These have been recommended since before Claude Opus 4.7, but because Opus 4.7 reads type information and descriptions more accurately, well-crafted tool definitions yield even greater returns than before.
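A tool definition that applies these practices might look like the sketch below. The `read_file` tool, its parameters, and the regex are hypothetical and exist only for illustration; the overall shape (name, description, and a JSON Schema under `input_schema`) follows the tool-use format of the Anthropic API.

```python
import json

READ_FILE_TOOL = {
    "name": "read_file",  # hypothetical tool, for illustration only
    "description": (
        "Read a text file and return its contents. "
        'Example input: {"target_file_path": "/usr/local/bin/run.sh", "encoding": "utf-8"}. '
        "On failure, return an error message describing the cause so the caller can retry."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "target_file_path": {
                "type": "string",
                "description": "Absolute path of the file to read.",
                "pattern": "^/.+",  # regex constraint: must be an absolute path
            },
            "encoding": {
                "type": "string",
                "enum": ["utf-8", "latin-1"],  # enum constraint on allowed values
                "description": "Text encoding used to decode the file.",
            },
        },
        "required": ["target_file_path"],
    },
}

print(json.dumps(READ_FILE_TOOL, indent=2))
```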

Visual Input Resolution and Preprocessing

With 3.75MP support, Opus 4.7 can directly process higher-resolution images than previous models. However, when sending images via API, keep the following points in mind.

- Input image aspect ratio: Extremely elongated images (e.g., 10:1 aspect ratio) reduce recognition accuracy. Crop to near-square when possible

- Color profile: Profiles other than sRGB may cause unintended tone shifts. Convert to sRGB when saving

- Compression format: No visible difference between PNG and JPEG (quality 85+), but PNG is recommended for text-heavy images

- Text orientation: Opus 4.7 auto-recognizes up to 90-degree rotation, but recognition drops for diagonal text

Just adding these preprocessing steps can improve effective visual task accuracy by several points.
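The size and aspect-ratio checks can be planned before any pixels are touched. The sketch below is pure arithmetic and assumes the 3.75MP figure quoted above as the pixel budget; the aspect-ratio threshold of 4:1 is an arbitrary illustrative value, and the function name is hypothetical.

```python
import math

MAX_PIXELS = 3_750_000  # 3.75MP figure quoted in this article
MAX_ASPECT_RATIO = 4.0  # assumed threshold; tune for your own images


def plan_resize(width: int, height: int) -> tuple[int, int, bool]:
    """Return (new_width, new_height, needs_crop) for an image before upload.

    Downscales proportionally so the pixel count fits within MAX_PIXELS and
    flags images whose aspect ratio is elongated enough to consider cropping.
    """
    needs_crop = max(width, height) / min(width, height) > MAX_ASPECT_RATIO
    pixels = width * height
    if pixels <= MAX_PIXELS:
        return width, height, needs_crop
    scale = math.sqrt(MAX_PIXELS / pixels)
    return max(1, int(width * scale)), max(1, int(height * scale)), needs_crop


print(plan_resize(4000, 3000))  # 12MP photo: scaled down, no crop needed
print(plan_resize(5000, 400))   # elongated strip: within budget but flagged for cropping
```

The actual resizing, cropping, and sRGB conversion would be done with an imaging library of your choice; this function only decides what needs to happen.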

Strategic Use of the Context Window

Claude Opus 4.7 2026 has a large context window. When handling lengthy inputs, the arrangement order of tokens is known to affect output quality. The recommended arrangement pattern is as follows.

- System prompt: Place at the top, cache target

- Background context: Documents and references. Place in the middle

- Task instructions: Place just before the end

- User input: Place at the very end

This arrangement maximizes Claude Opus 4.7’s tendency to “prioritize information closer to the end when it’s relevant to the task.” Conversely, placing important instructions at the beginning and burying them under background information has been observed to reduce reference accuracy.
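The recommended ordering can be enforced in one place so that individual call sites cannot get it wrong. This is a minimal sketch under assumed conventions: the function name and the `<document>` wrapper are illustrative, and the returned dictionary mirrors the `system` and `messages` arguments of a chat-style request.

```python
def assemble_prompt(system: str, documents: list[str], task: str, user_input: str) -> dict:
    """Order content as: system prompt, background documents, task instructions, user input."""
    background = "\n\n".join(
        f'<document index="{i}">\n{doc}\n</document>'
        for i, doc in enumerate(documents, start=1)
    )
    # Background goes first in the user turn; instructions and the question come last.
    user_turn = f"{background}\n\n{task}\n\n{user_input}"
    return {"system": system, "messages": [{"role": "user", "content": user_turn}]}


prompt = assemble_prompt(
    "You are a technical support assistant.",
    ["Manual chapter 1 ...", "Manual chapter 2 ..."],
    "Answer using only the documents above.",
    "What nozzle temperature is recommended for PLA?",
)
print(prompt["messages"][0]["content"])
```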

Debugging and Troubleshooting

If Opus 4.7 doesn’t produce the expected output, we recommend checking the following in order.

- Check prompt logs: Verify the complete prompt actually sent

- Cache hit status: Confirm that the expected cache hits are actually occurring

- Re-validate tool definitions: Re-examine argument types, descriptions, and required flags

- Pin model version: Pin the version to prevent unintended auto-updates

- Sampling settings: Review settings like temperature and top_p

These checks resolve over 90% of issues. The remaining 10% are cases requiring a redesign of the prompt itself.

Model Combination Strategies

Designing a system centered on Claude Opus 4.7 2026 while mixing in other models based on task characteristics leads to long-term cost optimization.

Pairing with Sonnet 4.6

Sonnet 4.6 is a mid-tier model with excellent speed-cost balance. We recommend processing the following tasks with Sonnet 4.6.

- Structured data extraction (JSON generation, table reading)

- Short text summarization and translation

- Routine classification tasks

- FAQ responses

A workflow routing design of “Sonnet 4.6 for first-pass processing, Opus 4.7 only for difficult cases” is rational. This reduces average costs by 30-50% while maintaining Opus 4.7-level accuracy for complex problems.

Pairing with Haiku Models

Ultra-lightweight tasks are best suited for Haiku models. Applicable scenarios include:

- Sentiment analysis (positive/negative)

- Short responses under 100 tokens

- Simple classification thrown in large batches

- Edge case detection as first-pass filter

Placing Haiku on the front line and routing only “difficult” cases to Opus 4.7 in a cascade design is a frequently adopted pattern in enterprise environments.

Model Routing Implementation Example

Structurally, the routing is a cascade: a lightweight front-line model handles each request first, and only the requests judged difficult are escalated to Opus 4.7.
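A minimal sketch of such a cascade is shown here. Both `classify_difficulty` and `call_model` are hypothetical callables supplied by the caller, the 0.5 threshold is an arbitrary assumption, and `CHEAP_MODEL` is a placeholder label rather than a real model ID.

```python
from typing import Callable

CHEAP_MODEL = "front-line-model"  # placeholder label, e.g. a Haiku or Sonnet model
STRONG_MODEL = "claude-opus-4-7-20260416"  # model ID as given in this article


def route(
    request: str,
    classify_difficulty: Callable[[str], float],
    call_model: Callable[[str, str], str],
    threshold: float = 0.5,
) -> tuple[str, str]:
    """Send easy requests to the cheap model and escalate hard ones.

    `classify_difficulty` returns a score in [0, 1]; `call_model(model, request)`
    wraps the API client. Both are hypothetical and supplied by the caller.
    """
    model = STRONG_MODEL if classify_difficulty(request) >= threshold else CHEAP_MODEL
    return model, call_model(model, request)


# Toy stand-ins: treat long requests as difficult and echo the chosen model.
def toy_score(text: str) -> float:
    return min(len(text) / 200, 1.0)


def toy_call(model: str, text: str) -> str:
    return f"[{model}] handled {len(text)} chars"


print(route("Is my order shipped?", toy_score, toy_call))
print(route("Refactor the slicer plugin. " * 10, toy_score, toy_call))
```

In practice the difficulty score would come from the front-line model itself or from simple heuristics, and the threshold would be tuned against the observed escalation rate.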

If Claude Opus 4.7 itself makes the routing decision, keeping the routing prompt fixed so that it can be served from the prompt cache also keeps the cost of that decision down.

Security and Compliance

Here we outline the security and compliance considerations when adopting Claude Opus 4.7 2026 in enterprise environments.

Data Protection and Privacy

Anthropic has published a policy that user data sent via the API is not used for model training. This provides a foundation for confidently using the service even for workloads containing confidential or personal information.

However, the following points must be addressed on the user’s side.

- Minimize confidential information (don’t include more information than necessary in prompts)

- Design appropriate log retention (store for a certain period for audit compliance)

- Region selection (use specific Bedrock or Vertex AI regions if data residency requirements apply)

Alignment with Corporate Policies

When adopting in large enterprises, alignment with the following policy items needs to be verified.

- AI Usage Policy: Cross-reference with internal AI usage policies

- Security Review: Approval from information security department

- Legal Review: Confirmation of copyright and liability scope for generated content

- Audit Compliance: Log retention, access control, usage reports

Claude Opus 4.7 2026 provides feature sets that address these requirements through Enterprise contracts.

Industry-Specific Compliance

For high-compliance industries such as finance, healthcare, and government agencies, the following additional measures are recommended.

- Separate processing paths based on data classification labeling (confidential, public, etc.)

- Implement PII detection and masking preprocessing pipelines

- Mandatory human final approval through human-in-the-loop design

- Continuous output monitoring and alert design
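As an illustration of the masking step, the sketch below replaces e-mail addresses and hyphenated phone numbers with placeholders before text is placed in a prompt. The two regular expressions are deliberately simple assumptions; real PII detection needs far broader coverage (names, addresses, IDs) and usually a dedicated tool.

```python
import re

# Deliberately simple patterns for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{2,4}-\d{2,4}-\d{3,4}\b")


def mask_pii(text: str) -> str:
    """Replace e-mail addresses and hyphenated phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


print(mask_pii("Contact taro@example.com or 03-1234-5678 about order 42."))
# prints "Contact [EMAIL] or [PHONE] about order 42."
```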

Roadmap Predictions Beyond Summer 2026

Taking the release of Claude Opus 4.7 2026 as a starting point, here’s a summary of observations on how the AI model competition will evolve in the latter half of 2026.

GPT-5.4 scored 57.7% on SWE-bench Pro and Gemini 3.1 Pro scored 54.2%, putting the gap with Opus 4.7 at 6-10 points. The focus is on when OpenAI and Google will close this gap, but based on past trends, Anthropic’s advantage is likely to persist for about six months.

Meanwhile, there are indications that Anthropic itself is advancing research toward the next generation of the Opus line (5.0 series), and further breakthroughs may be announced in the second half of 2026. When making adoption decisions now, it’s rational to invest with the premise that “Opus 4.7 will remain a cutting-edge model for at least six months.”

Conclusion — Why You Should Migrate to Opus 4.7 Now

Claude Opus 4.7 2026 is not just a model update — it’s an event that “redraws the profitability line for agent implementations.” SWE-bench Pro 64.3%, visual accuracy 98.5%, CursorBench 70%, tool errors reduced by 1/3, production task solving 3x. Individually, these numbers may look like “10-point improvements,” but when integrated across agent operations, they hold the potential to halve human supervision costs.

Pricing is identical to Opus 4.6, available on all major cloud channels, and migration primarily involves changing the model ID — with these three conditions met, there’s virtually no reason to hold off on migration. Workloads involving long-running coding, visual tasks, and multi-step processing should consider “switching this week.” Meanwhile, lightweight tasks that Haiku handles well should continue to be delegated separately, maintaining the approach of optimizing model selection by use case.

Starting tomorrow, this series will dive deep into Anthropic’s new product lineup one by one. See also our thorough guide to Claude Design.

Further Reading

For detailed specifications of Claude Opus 4.7, API reference, and recommended prompt engineering patterns, please refer to the official Anthropic documentation (https://docs.anthropic.com/). The latest release information and capability updates are regularly published on the Anthropic official news page (https://www.anthropic.com/news). Detailed benchmark breakdowns and evaluation methodologies are available in the official blog release posts.