Agent Teams vs Subagents Complete Comparison 2026 — Choosing Between Two Multi-Agent Patterns

Choosing correctly between the two multi-agent patterns in the Claude ecosystem, Agent Teams and Subagents, requires understanding how they differ. Agent Teams, released alongside Opus 4.6 in February 2026, attracted attention as a new architecture for the “collaborative tasks” that Subagents alone could not fully address. This article covers architecture, communication patterns, selection criteria, implementation examples, and token costs in turn.

The main benefit of understanding the comparison is that you can pick a suitable design directly from the nature of the problem. Using Agent Teams for independent tasks wastes tokens; using Subagents for collaborative tasks degrades result quality. The difference boils down to the presence of a “shared task list,” but the operational differences that follow from it are extensive. Once you internalize these axes, you can evaluate future patterns against them as well.

- Agent Teams Overview — New Architecture Released February 2026

- The Decisive Difference from Subagents — Shared Task Lists

- Agent Teams Architecture — Lead Agent and Specialist Agent Groups

- Task State Management — The claim/in-progress/completed Lifecycle

- Peer-to-Peer Communication vs Hub-and-Spoke

- Subagents’ Strengths — Context Isolation and Maximum Parallelism

- Agent Teams’ Strengths — Shared State, Cross-referencing, and Dependency Coordination

- Selection Criteria — Judge by Task Independence

- Implementation Examples — Implementing the Same Task with Both Patterns

- Performance and Token Cost Comparison

- Operational Pitfalls — Common Problems Encountered in Both Patterns

- Debugging and Troubleshooting

- Monitoring Metrics for Production Operations

- Future Extensions — Nested Patterns and Hybrid Operations

- Comparing with Concrete Examples — Code Review vs Web App Development

- Design Anti-patterns — Combinations to Avoid

- Outlook for Late 2026 — The Next Stage of Multi-Agent Systems

- Summary — Maximize Multi-Agent Power with the Right Tool for the Right Job

Agent Teams Overview — New Architecture Released February 2026

Agent Teams is a new multi-agent feature released by Anthropic simultaneously with Claude Opus 4.6 in February 2026. Anthropic had been hinting at the need for “a mechanism for multiple agents to collaboratively progress tasks,” and Agent Teams was designed as the answer.

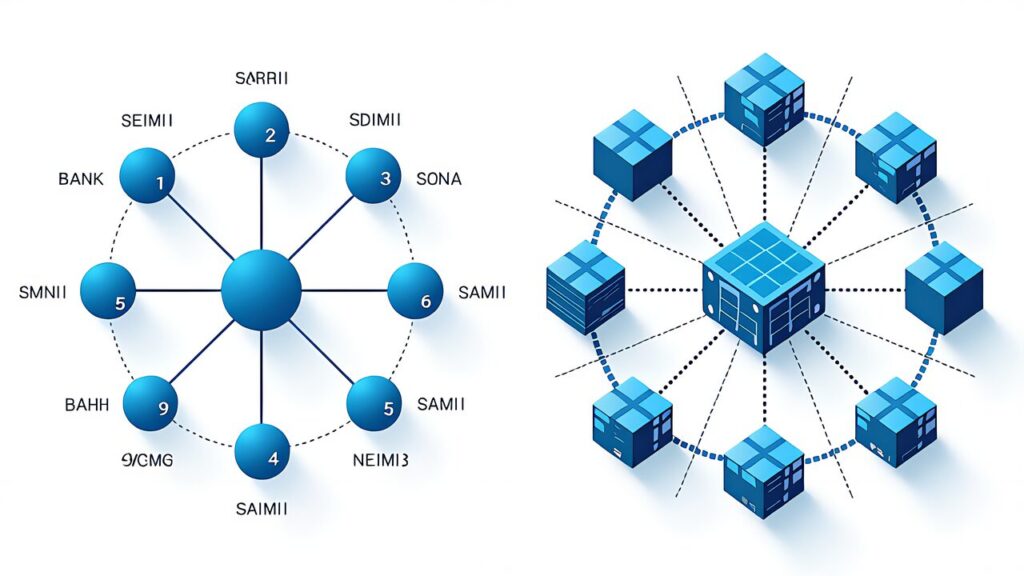

The core characteristic of Agent Teams is the “shared task list (shared state store).” Multiple agents can reference and update the same task list, so every agent instantly knows who’s doing what and which tasks are complete. This is a fundamentally different paradigm from Subagents’ independent execution model.

At initial release, Agent Teams supported a leader-worker topology, in which a leader agent breaks down tasks and distributes them to worker agents. Workers share progress via the shared task list, and the leader monitors and adjusts as needed.

The first key point of the comparison is this “shared state.” While Subagents operate as completely independent parallel executions, Agent Teams function as coordinated execution in which all members constantly view the same board.

The Decisive Difference from Subagents — Shared Task Lists

The mechanism of information sharing most succinctly illustrates the difference between Subagents and Agent Teams.

| Item | Subagents | Agent Teams |
|---|---|---|
| Information Sharing | None (fully independent) | Via shared task list |
| Execution Model | Independent parallel execution | Coordinated execution |
| Communication Pattern | Hub-and-Spoke (parent↔child only) | Peer-to-peer capable |
| Intermediate Results | Aggregated by parent; invisible between children | Accessible to all agents |
| Dependent Tasks | Parent controls ordering | Dependencies declared in task list |
| Best Suited For | Independent analysis and research | Complex designs requiring coordination |

When you launch Subagents, each subagent operates in an independent context and returns results to the parent agent upon task completion. The children are not even aware of each other’s existence. This is extremely efficient for independent analysis tasks — for use cases like running code reviews of 5 modules in parallel, Subagents is the fastest option.
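The fan-out described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `review_module` is a hypothetical stand-in for launching one subagent (a real one would call a model), and the module names are invented for the example.

```python
import asyncio


async def review_module(name: str) -> str:
    """Hypothetical stand-in for one subagent reviewing one module."""
    await asyncio.sleep(0.01)  # simulate independent work
    return f"{name}: no issues found"


async def parent(modules: list[str]) -> list[str]:
    # Hub-and-spoke: the parent launches every child and is the only
    # place where results meet. Children never see each other's output.
    return await asyncio.gather(*(review_module(m) for m in modules))


modules = ["auth", "billing", "search", "storage", "ui"]
reports = asyncio.run(parent(modules))
for line in reports:
    print(line)
```

Because the children share nothing, the wall-clock time is close to that of the slowest single review rather than the sum of all five.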

In contrast, Agent Teams operates on the premise that each agent reads from and writes to a shared task list. For example, when building a web app, if the frontend agent needs to define the API specification, it can add an “API Specification Definition” task to the shared task list, and the backend agent can claim and work on it. This kind of coordination is impossible with Hub-and-Spoke Subagents.

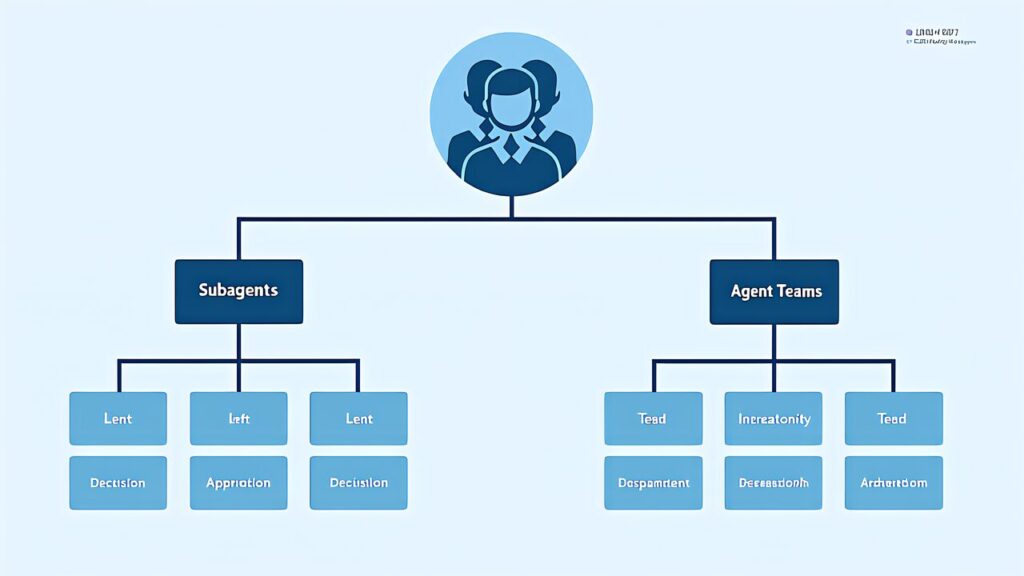

Agent Teams Architecture — Lead Agent and Specialist Agent Groups

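The standard architecture of Agent Teams has four components: the lead agent, the specialist agent groups, the shared task list, and the communication layer.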

The lead agent (orchestrator) receives the user’s task and oversees the entire operation. It decomposes tasks and places them on the shared task list, monitors the progress of specialist agents, and handles the final integration of deliverables.

Specialist agent groups each possess expertise in specific domains. For web app development, they might be divided into “Frontend,” “Backend,” “Database,” “Testing,” and “DevOps.” Each agent claims tasks in its domain and writes progress back to the shared task list.

The shared task list (shared state store) is a central state store that all agents can reference and update. Each task records fields such as identifier, description, status (todo/in-progress/done/blocked), assignee, dependencies, and results. Dependencies express ordering relationships between tasks.
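As a rough picture of such a record, the fields listed above map naturally onto a small data class. The class and field names below are assumptions made for illustration, not a published Agent Teams schema; `is_ready` is a hypothetical helper showing how dependencies gate a task.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    TODO = "todo"
    IN_PROGRESS = "in-progress"
    DONE = "done"
    BLOCKED = "blocked"


@dataclass
class Task:
    # Field names follow the prose above; the real schema may differ.
    id: str
    description: str
    status: Status = Status.TODO
    assignee: Optional[str] = None
    dependencies: list[str] = field(default_factory=list)
    results: Optional[str] = None


def is_ready(task: Task, tasks: dict[str, Task]) -> bool:
    """A todo task is ready once every dependency has reached done."""
    return task.status is Status.TODO and all(
        tasks[dep].status is Status.DONE for dep in task.dependencies
    )


task_list = {
    "T1": Task("T1", "Design auth API"),
    "T2": Task("T2", "Implement auth API", dependencies=["T1"]),
}
print(is_ready(task_list["T1"], task_list), is_ready(task_list["T2"], task_list))
```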

The communication layer allows peer-to-peer communication between agents. For example, a frontend-agent can directly message a backend-agent saying “I’d like to finalize this endpoint’s specification.” This kind of direct communication is impossible with Subagents, which always require going through the parent agent.

Task State Management — The claim/in-progress/completed Lifecycle

Tasks in Agent Teams’ shared task list follow this lifecycle.

First, when the lead agent creates a task, it’s registered with status: todo. Specialist agents monitor todo tasks and execute a claim operation when they find one in their domain. Upon successful claim, the status transitions to in-progress and the agent’s ID is recorded in the assignee field. When multiple agents attempt to claim simultaneously, a distributed locking mechanism ensures only one succeeds.

An agent holding an in-progress task carries out the actual work. If it discovers a dependency on another task midway, the task transitions to blocked and a new dependency task is created. Once that dependency reaches done, the blocked task automatically returns to in-progress.

When a task is completed, the agent records deliverables in the results field and transitions the status to done. The lead agent detects the done transition and updates overall task progress.
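The claim and completion steps of this lifecycle can be sketched as follows. This is a toy in-memory model, not the Agent Teams implementation: a `threading.Lock` stands in for the distributed lock, `TaskBoard` and its methods are hypothetical names, and the blocked transition is left out for brevity.

```python
import threading


class TaskBoard:
    """Toy shared task list; one process-local lock plays the distributed lock."""

    def __init__(self):
        self._lock = threading.Lock()
        self.tasks = {}  # task id -> {"status", "assignee", "results"}

    def add(self, task_id):
        with self._lock:
            self.tasks[task_id] = {"status": "todo", "assignee": None, "results": None}

    def claim(self, task_id, agent_id):
        # Check-and-set under the lock, so only one claimant can win.
        with self._lock:
            task = self.tasks[task_id]
            if task["status"] != "todo":
                return False
            task["status"] = "in-progress"
            task["assignee"] = agent_id
            return True

    def complete(self, task_id, agent_id, results):
        with self._lock:
            task = self.tasks[task_id]
            if task["status"] != "in-progress" or task["assignee"] != agent_id:
                raise ValueError("only the assignee can complete an in-progress task")
            task["status"] = "done"
            task["results"] = results


board = TaskBoard()
board.add("T1")
outcomes = {}


def try_claim(agent_id):
    outcomes[agent_id] = board.claim("T1", agent_id)


threads = [threading.Thread(target=try_claim, args=(f"agent-{i}",)) for i in range(5)]
for t in threads:
    t.start()
for t in threads:
    t.join()

winner = board.tasks["T1"]["assignee"]
board.complete("T1", winner, "spec written")
print(sum(outcomes.values()), board.tasks["T1"]["status"])  # prints "1 done"
```

Five agents race for the same task, exactly one claim returns `True`, and only that agent is allowed to mark the task done.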

This state management lets Agent Teams proceed without breaking down even when task dependencies are complex. Whereas Subagents require the parent agent to control ordering explicitly, the Agent Teams task list progresses in a self-organizing manner. This is where the practical difference between the two patterns is most apparent.

Peer-to-Peer Communication vs Hub-and-Spoke

The difference in communication patterns fundamentally determines multi-agent design.

In Subagents’ Hub-and-Spoke model, all communication goes through the parent agent. When child A wants to pass information to child B, it takes two hops (child A → parent → child B), and the parent can only process its interactions with each child sequentially. For tasks that require close coordination between children, the parent therefore becomes a bottleneck and most of the benefit of parallelism is lost.

In Agent Teams’ peer-to-peer model, agents can exchange messages directly. The frontend-agent and backend-agent can discuss API specifications directly while writing code in parallel. The lead agent monitors progress but doesn’t need to relay all communications.

However, peer-to-peer communication carries risks. Token consumption grows with the number of messages, and discussions can diverge without reaching a conclusion (the “committee syndrome”). It’s recommended to build an arbitration rule into the lead agent, such as “the lead agent decides if a discussion doesn’t resolve within a set time.”
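One way to picture such an arbitration rule is a bounded negotiation loop. The sketch below is an assumption-heavy illustration: it budgets by round trips rather than wall-clock time, and `propose`, `accept`, and `lead_decides` are hypothetical callables standing in for agent behavior.

```python
from typing import Callable


def negotiate(
    propose: Callable[[int], str],
    accept: Callable[[str], bool],
    lead_decides: Callable[[list[str]], str],
    max_round_trips: int = 3,
) -> tuple[str, str]:
    """Return (decision, decided_by). All callables are hypothetical agent hooks."""
    proposals: list[str] = []
    for round_no in range(max_round_trips):
        proposal = propose(round_no)
        proposals.append(proposal)
        if accept(proposal):
            return proposal, "peers"
    # No consensus within the budget: the lead agent arbitrates.
    return lead_decides(proposals), "lead"


decision, decided_by = negotiate(
    propose=lambda n: f"pagination-v{n + 1}",
    accept=lambda p: False,          # the peer never agrees
    lead_decides=lambda ps: ps[-1],  # the lead picks the latest proposal
)
print(decision, decided_by)  # prints "pagination-v3 lead"
```

A hard cap like this bounds the token cost of any single disagreement, at the price of occasionally cutting off a discussion that was about to converge.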

Subagents’ Strengths — Context Isolation and Maximum Parallelism

So far we’ve focused on Agent Teams’ advantages, but Subagents also have clear strengths.

Independent contexts completely prevent context contamination. Since each subagent has no knowledge of what other subagents are seeing, unintended information leakage or misunderstandings cannot occur. When running a security review and performance review simultaneously, the fact that they don’t influence each other is a characteristic unique to Subagents.

Isolated execution maximizes parallelism. While Agent Teams’ shared task list is convenient, it incurs synchronization overhead. For purely independent tasks (such as static analysis of 5 files), Subagents complete faster and at lower cost.

The model of returning only final results simplifies context management for the parent agent. Subagents output only aggregated results, and detailed intermediate steps don’t flow into the parent. This conserves the parent’s context window.

Agent Teams’ Strengths — Shared State, Cross-referencing, and Dependency Coordination

On the other hand, Agent Teams’ strengths lie in coordination for complex tasks.

Cross-referencing through shared state keeps multiple agents’ deliverables consistent. It prevents the typical failure where the API specification decided by the frontend team diverges from the backend team’s implementation. In Agent Teams, both parties view the same task list, so specification changes propagate immediately.

Automatic coordination of dependent tasks eliminates the need for humans (or the lead agent) to give step-by-step instructions for complex ordering. If you define dependencies like “implement authorization logic after the authentication API is complete” in the task definition, agents autonomously maintain the correct order.

The ability to handle dynamic task additions is also significant. When new work is discovered during task execution, an agent can add new tasks to the shared task list. With Subagents, the parent would need to launch new subagents again, incurring context handoff costs.

Selection Criteria — Judge by Task Independence

The practical conclusion of the comparison is a single principle: judge by task independence.

Scenarios Where Subagents Should Be Chosen

- Independently performing static analysis on 5 files

- Running security reviews of 10 libraries in parallel

- Evaluating the same code from different perspectives (security/performance/readability)

- Independently verifying facts from multiple sources

- Running multiple independent test suites in parallel

All of these are tasks where “children don’t need to coordinate with each other.” Subagents’ independent execution model delivers maximum efficiency.

Scenarios Where Agent Teams Should Be Chosen

- Web app design spanning frontend/backend/database

- Cross-phase projects from specification → implementation → testing

- Refactoring across multiple modules

- Investigations requiring response to dynamically emerging tasks

- Work that requires cross-referencing intermediate deliverables

These are tasks where “coordination between children is fundamentally necessary.” Consistency cannot be maintained without a shared task list.

When In Doubt

Start with Subagents, and if the parent agent becomes overwhelmed relaying information, migrate to Agent Teams. Agent Teams has higher initial costs, making it unsuitable for simple tasks. Whether Agent Teams pays off depends on task complexity.

Implementation Examples — Implementing the Same Task with Both Patterns

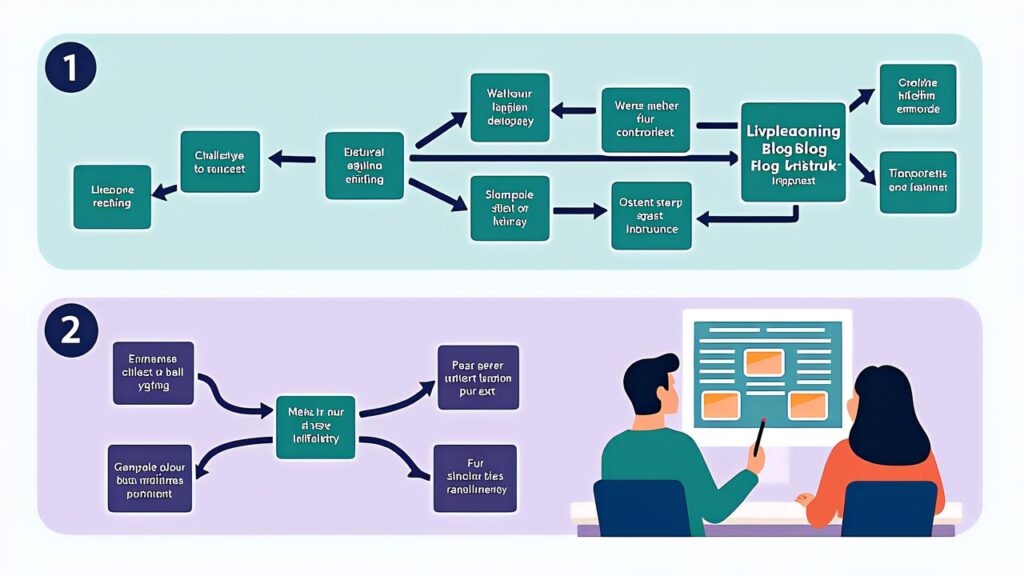

Let’s compare both patterns using “creating a technical blog post” as an example.

Subagents Pattern

The parent agent launches the following subagents sequentially.

- Research agent: Collects information about the topic and returns results

- Outline agent: Receives research results and returns a structure proposal

- Writing agent: Receives the outline and returns the body text

- Review agent: Receives the body text and returns improvement suggestions

In this case, each agent receives only the output from the previous stage as input. While it’s serial execution, the parent receives output at each stage and launches the next, making it easy for humans to intervene midway. The drawback is that if a research gap is discovered during writing, additional research cannot be dynamically added.

Agent Teams Pattern

The lead agent places the following on the shared task list.

- T1: Research (3 main perspectives)

- T2: Outline creation (depends on T1)

- T3: Body writing (depends on T2)

- T4: Review (depends on T3)

Each specialist agent autonomously claims tasks. If the writing agent determines “there’s not enough data” midway, it adds T1.5: Additional Research to the task list, and the research agent restarts. The review agent begins after T3 is complete.
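The effect of declared dependencies and of adding a task mid-run can be sketched with the standard library's `graphlib`. The task ids mirror T1 to T4 above; `runnable` is a hypothetical helper, and treating T1.5 as a new dependency of T3 is an assumption about how the writing agent would record the gap.

```python
from graphlib import TopologicalSorter

# Task id -> ids it depends on, mirroring T1..T4 above.
deps = {"T1": set(), "T2": {"T1"}, "T3": {"T2"}, "T4": {"T3"}}


def runnable(deps, done):
    """Tasks not yet done whose dependencies are all done."""
    return sorted(t for t, needs in deps.items() if t not in done and needs <= done)


# With a purely linear chain there is exactly one valid order.
initial_order = list(TopologicalSorter(deps).static_order())

done = {"T1", "T2"}
before = runnable(deps, done)  # T3 is the only task that can run

# The writing agent finds a data gap: add T1.5 and make T3 wait for it.
deps["T1.5"] = set()
deps["T3"].add("T1.5")
during = runnable(deps, done)  # now only T1.5 can run; T3 is blocked

done.add("T1.5")
after = runnable(deps, done)  # T3 becomes runnable again

print(initial_order, before, during, after)
```

No agent has to be told the new order; it falls out of the dependency data once T1.5 is on the list.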

In the Agent Teams pattern, dynamic task addition and autonomous coordination work effectively. However, there is overhead from task list management, and for simple serial writing, Subagents is more lightweight.

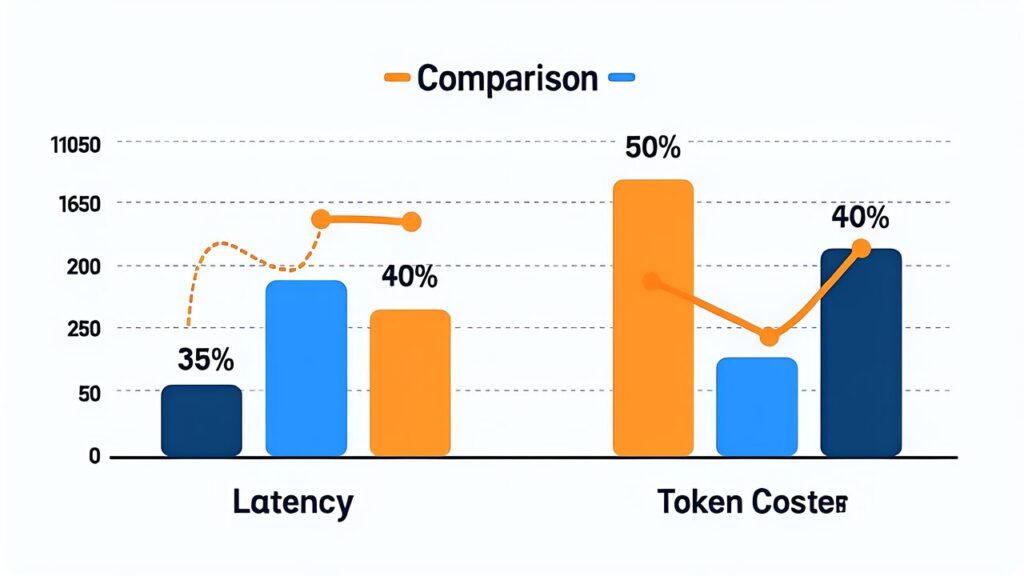

Performance and Token Cost Comparison

Next, let’s compare the two patterns on performance and cost.

| Metric | Subagents | Agent Teams |
|---|---|---|
| Parallelism | Maximum (fully independent) | Moderate (synchronization points exist) |
| Token Consumption | Low (no intermediate state) | Medium to High (shared state maintenance) |
| Latency | Short (parallel completion) | Medium (coordination waits occur) |
| Initial Setup Cost | Low | High (task list design) |
| Operational Traceability | Low (child details invisible) | High (task list visible) |

Using Subagents for independent tasks can roughly halve token costs compared with running the same tasks on Agent Teams. Conversely, forcing Subagents onto coordination tasks quickly inflates the parent’s context, and the resulting do-overs make the total cost higher than Agent Teams.

Operational traceability overwhelmingly favors Agent Teams. A glance at the shared task list shows what stage you’re at and where things are stuck. For long-running large projects, this visibility significantly affects operational confidence.

Operational Pitfalls — Common Problems Encountered in Both Patterns

When running either pattern in practice, you’ll encounter several typical pitfalls. Knowing them in advance can dramatically shorten the trial-and-error period of the first few weeks.

The first pitfall with Subagents is parent agent context bloat. When the parent aggregates results from all subagents, their entire output flows into the parent’s context. Five or so subagents are manageable, but beyond ten the parent’s context becomes bloated and judgment quality degrades. Effective countermeasures include designing subagents to return summarized results, or using hierarchical structures (parent → intermediate leaders → subagent groups).

The second pitfall with Subagents is redundant work between subagents. Due to independent execution, wasteful situations occur where 5 subagents run the same web search in parallel. The preferred design is to pre-fetch common information in the parent agent and pass it as arguments when launching subagents.

The first pitfall with Agent Teams is excessive task granularity. Over-enthusiastic design that decomposes into 100+ tasks causes agents to struggle with task selection, slowing overall progress. A practical rule is to keep each task at a granularity that “one agent can complete in 30 minutes to a few hours.”

The second pitfall with Agent Teams is never-ending discussions. Since peer-to-peer communication between agents is permitted, endless debates can occur on topics where no consensus is reached. This can be avoided by building a rule into the lead agent: “if no conclusion is reached after N round trips, the lead agent decides.”

The third pitfall with Agent Teams is task list state management breakdown. When multiple agents attempt simultaneous claims, or when blocked task dependency resolution fails, the task list enters an inconsistent state. It’s recommended to build in a “health check” process where the lead agent periodically checks task list integrity and repairs inconsistencies.
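A health check of this kind can be as simple as a function that scans the list for states that should never occur. The three rules below are illustrative assumptions, not an official integrity specification, and the dictionary layout is hypothetical.

```python
def check_task_list(tasks: dict[str, dict]) -> list[str]:
    """Return human-readable descriptions of inconsistent task states."""
    problems = []
    for tid, task in tasks.items():
        deps = task.get("dependencies", [])
        missing = [d for d in deps if d not in tasks]
        if missing:
            problems.append(f"{tid}: unknown dependencies {missing}")
        if task["status"] == "in-progress" and not task.get("assignee"):
            problems.append(f"{tid}: in-progress without an assignee")
        # A blocked task whose dependencies are all done was never released.
        if task["status"] == "blocked" and not missing and all(
            tasks[d]["status"] == "done" for d in deps
        ):
            problems.append(f"{tid}: blocked but every dependency is done")
    return problems


tasks = {
    "T1": {"status": "done", "assignee": "backend", "dependencies": []},
    "T2": {"status": "blocked", "assignee": "frontend", "dependencies": ["T1"]},
    "T3": {"status": "in-progress", "assignee": None, "dependencies": ["T9"]},
}
for problem in check_task_list(tasks):
    print(problem)
```

The lead agent could run such a check on a schedule and either repair the state itself or escalate to a human, depending on the rule that fired.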

Debugging and Troubleshooting

When problems occur in multi-agent operations, identifying the cause is harder than with a single agent. Let’s organize the debugging approaches for each pattern.

For debugging Subagents, record each subagent’s input and output individually. If you can identify which subagent produced unexpected results, the cause is localized. Logs should include the arguments passed to the subagent, tools used, and final output.

For debugging Agent Teams, track the shared task list history in timeline format. View chronologically which agent claimed each task, how states transitioned, and where blockages occurred. By reconstructing the context at the moment a problem occurs, you can identify design flaws or instruction ambiguities.
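A timeline view like this can be reconstructed from a plain event log. The sketch below assumes a hypothetical log format (timestamp, task id, agent, new status); Agent Teams may expose its history differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    ts: float      # seconds since the run started (hypothetical log field)
    task_id: str
    agent: str
    status: str    # status the task moved into


def timeline(events, task_id):
    """Chronological (ts, agent, status) history for one task."""
    ordered = sorted(events, key=lambda e: e.ts)
    return [(e.ts, e.agent, e.status) for e in ordered if e.task_id == task_id]


def time_blocked(events, task_id):
    """Total seconds the task spent in the blocked state."""
    total, since = 0.0, None
    for ts, _, status in timeline(events, task_id):
        if status == "blocked" and since is None:
            since = ts
        elif status != "blocked" and since is not None:
            total += ts - since
            since = None
    return total


events = [
    Event(70.0, "T3", "writer", "in-progress"),
    Event(10.0, "T3", "writer", "in-progress"),
    Event(25.0, "T3", "writer", "blocked"),
    Event(30.0, "T1.5", "researcher", "in-progress"),
    Event(90.0, "T3", "writer", "done"),
]
print(timeline(events, "T3"))
print(time_blocked(events, "T3"))  # prints 45.0
```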

A debugging technique common to both patterns is creating a “minimal reproduction case.” Extract only the essential parts of the problem from the production task and reproduce with minimal configuration. This helps distinguish whether the issue is specific to multi-agent operations or due to individual agent capability limitations.

Gradually simplifying agent instructions (system prompts) is also effective. As you strip away complex instructions, the problem disappears at some point. The instruction removed at that moment is likely the cause of the problem.

Monitoring Metrics for Production Operations

When running either pattern in production, continuously monitoring the following metrics enables early detection of quality degradation and cost increases.

Task completion rate is the percentage of launched tasks that complete successfully. If it drops below 80%, the design needs revision. Classifying failure patterns helps determine whether the issue is agent capability limitations or task design flaws.

Average completion time is the time from task launch to completion. Track daily and weekly to observe trend changes. A sharp increase suggests model-side changes or increased task complexity.

Token consumption is the average number of tokens per task. Comparing between Subagents and Agent Teams provides data for judging which is more cost-efficient.

Inter-agent communication count is a metric specific to Agent Teams. Excessive communication indicates discussion divergence, while insufficient communication indicates information sharing failures. The appropriate range is determined empirically based on task complexity.

Human intervention rate is the percentage of cases requiring human intervention. If this is high, autonomy is insufficient; if too low, there’s a risk of runaway behavior. Around 10-20% is a practical balance.
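These metrics are straightforward to compute from per-task run records. The sketch below uses a hypothetical `TaskRun` record and applies the 80% and 10-20% thresholds quoted above; the alert wording is illustrative.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    succeeded: bool
    seconds: float
    tokens: int
    human_intervened: bool


def summarize(runs):
    n = len(runs)
    return {
        "completion_rate": sum(r.succeeded for r in runs) / n,
        "avg_seconds": mean(r.seconds for r in runs),
        "avg_tokens": mean(r.tokens for r in runs),
        "intervention_rate": sum(r.human_intervened for r in runs) / n,
    }


def alerts(summary):
    out = []
    if summary["completion_rate"] < 0.80:
        out.append("completion rate below 80%: revisit the design")
    if summary["intervention_rate"] > 0.20:
        out.append("intervention rate above 20%: autonomy may be insufficient")
    if summary["intervention_rate"] < 0.10:
        out.append("intervention rate below 10%: check for runaway behavior")
    return out


runs = (
    [TaskRun(True, 60.0, 1000, False)] * 6
    + [TaskRun(True, 120.0, 2000, True)]
    + [TaskRun(False, 30.0, 500, False)] * 3
)
summary = summarize(runs)
print(summary)
print(alerts(summary))
```

In this sample, 7 of 10 tasks succeed, so only the completion-rate alert fires; the intervention rate of 10% sits at the lower edge of the suggested band.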

Future Extensions — Nested Patterns and Hybrid Operations

As an evolution of the two patterns, nested configurations that combine both are gaining traction experimentally.

A typical nested pattern is a configuration where each specialist agent in Agent Teams internally launches Subagents. For example, a frontend specialist agent runs “static analysis of modules A/B/C” as parallel Subagents. The specialist agent receives each Subagent’s results and writes aggregated results to the Agent Teams shared task list.

The advantage of this configuration is the ability to use the optimal pattern based on task characteristics. The broad framework requiring coordination uses Agent Teams, while parallel processing of independent tasks uses Subagents — this division of roles functions naturally.

The drawback is increased design complexity. Unless you keep track of which agent is operating under which pattern, debugging becomes difficult. In practice, it’s recommended to limit nesting to 2 levels and avoid going to 3 or more.

Looking further ahead, “hybrid operations” that dynamically switch between Agent Teams and Subagents based on task characteristics are also likely to become practical. In such a design the lead agent judges task independence and routes highly independent work to Subagents and coordination-heavy work to Agent Teams. The comparison in this article is the foundation for that kind of advanced operational design.

Comparing with Concrete Examples — Code Review vs Web App Development

Let’s apply the selection criteria to two concrete examples.

Example 1 — Multi-file Code Review

Consider a task of reviewing 10 Python files from 3 perspectives: security, performance, and readability.

When implementing with Subagents, the parent agent launches 10 subagents. Each subagent handles one file and performs the 3-perspective review, and all 10 run in parallel. No communication between children is needed, so they can operate completely independently. Results are aggregated by the parent, which generates a final report. Because the reviews run in parallel, total execution time is roughly the review time for the slowest single file.

When implementing with Agent Teams, it would work, but the shared task list management overhead is wasted. Ten tasks line up on the list, but there are no dependencies and no need for cross-referencing. Subagents is clearly more efficient.

For this task, Subagents is the optimal solution.

Example 2 — Web App Development Project

Consider a task of developing a web app with authentication from scratch. Frontend (React), Backend (FastAPI), Database (PostgreSQL), Testing, and Infrastructure configuration are required.

When implementing with Subagents, the parent agent needs to launch 5 subagents sequentially. When the frontend team wants to define the API specification, they need to query the backend team through the parent, creating complex coordination within the parent’s context. With many dependent tasks, the parent becomes consumed with order control and becomes a bottleneck.

When implementing with Agent Teams, task dependencies can be declared in the shared task list. Dependency chains like “Auth API Design” → “Auth API Implementation” → “Login Screen Implementation” are automatically order-controlled. Frontend and backend teams can finalize API specifications through direct communication. Even if new tasks are discovered midway (e.g., OAuth support), they’re autonomously processed once added to the task list.

For this task, Agent Teams is the optimal solution.

Decision Framework

Let’s organize the decision framework derived from the above two examples.

| Decision Criteria | Suited for Subagents | Suited for Agent Teams |
|---|---|---|
| Task Independence | High | Low |
| Inter-task Dependencies | Almost none | Many |
| Child-to-child Communication | Not needed | Needed |
| Dynamic Task Addition | Not needed | Needed |
| Parallelism Priority | Most important | Moderate |
| Deliverable Integration | Simple aggregation | Cross-referencing |

When in doubt, apply the 6 criteria above. If 4 or more lean toward Subagents, choose Subagents; if 4 or more lean toward Agent Teams, choose Agent Teams. On an even 3-3 split, start with Subagents, in line with the advice given earlier for uncertain cases.
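The six-criteria rule can be written down as a small scoring function. The criterion names are hypothetical labels for the table rows, and resolving a 3-3 split toward Subagents is an assumption based on the article's advice to start with Subagents when in doubt.

```python
# One flag per table row; True means the row leans toward Agent Teams.
CRITERIA = (
    "low_task_independence",
    "many_dependencies",
    "child_communication_needed",
    "dynamic_task_addition_needed",
    "parallelism_not_top_priority",
    "cross_referencing_needed",
)


def choose_pattern(answers: dict[str, bool]) -> str:
    missing = set(CRITERIA) - answers.keys()
    if missing:
        raise ValueError(f"unanswered criteria: {sorted(missing)}")
    teams_votes = sum(answers[c] for c in CRITERIA)
    # 4 or more votes pick Agent Teams; everything else, including a
    # 3-3 split (an assumption of this sketch), falls back to Subagents.
    return "Agent Teams" if teams_votes >= 4 else "Subagents"


code_review = dict.fromkeys(CRITERIA, False)  # Example 1: fully independent files
web_app = dict.fromkeys(CRITERIA, True)       # Example 2: tightly coupled work
print(choose_pattern(code_review), "/", choose_pattern(web_app))
# prints "Subagents / Agent Teams"
```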

Design Anti-patterns — Combinations to Avoid

Whichever pattern you choose, there are several anti-patterns to avoid in implementation.

Anti-pattern 1 — Forcing Coordination Tasks into Subagents

Attempting to process tasks with low independence using Subagents exhausts the parent agent with information relay. The parent’s context bloats, judgment quality drops, and rework eventually occurs. In such cases, you should straightforwardly migrate to Agent Teams.

Anti-pattern 2 — Processing Independent Tasks with Agent Teams

Processing independent tasks with Agent Teams pays the overhead of shared task list management for no benefit. Consuming 2-3x the tokens of Subagents worsens ROI. Run simple parallel tasks with the lighter-weight Subagents pattern.

Anti-pattern 3 — Concentrating All Authority in the Lead Agent

Over-concentrating functionality in the Agent Teams lead agent turns the lead agent itself into a bottleneck. The lead agent should focus on “task decomposition, progress monitoring, and conclusion integration,” fully delegating actual work to specialist agents.

Anti-pattern 4 — Excessive Agent Count

Increasing the number of agents doesn’t infinitely increase parallelism. Beyond 10 agents, coordination costs often outweigh parallelism benefits. 5-7 agents is a practical upper limit guideline.

Anti-pattern 5 — Excessive Permissions

Granting overly broad permissions to each agent increases the risk of unexpected side effects. Adhere to the principle of least privilege, limiting to the minimum necessary tool access.

Outlook for Late 2026 — The Next Stage of Multi-Agent Systems

Based on the current state of both patterns, let’s outline the outlook for late 2026 and beyond.

Dynamic composition capabilities for Agent Teams are expected to be implemented. Agent configurations will be dynamically changeable at runtime based on task characteristics. Designs that auto-adjust to 3 agents for simple tasks and 7 agents for complex tasks will become practical.

Formal communication protocols between agents will also see progress in standardization. Currently, unstructured text communication is mainstream, but once structured messages (JSON-format requests/responses) are standardized, misunderstandings between agents will decrease and coordination quality will improve.

Long-term tasks spanning multiple sessions are also expected to become manageable. Designs where Agent Teams can suspend and resume large-scale projects spanning several days will become possible.

On the Subagents side, hierarchical structures (subagents launching grandchild agents) may also become standardized. This would enable more complex task decomposition.

Summary — Maximize Multi-Agent Power with the Right Tool for the Right Job

The essence of the comparison boils down to one point: “assess task independence.” Use Subagents for independent tasks, Agent Teams for tasks requiring coordination, and start with Subagents when in doubt.

Looking toward late 2026, integration and interoperability between the two patterns are also expected to advance. For example, a “nested pattern” where some specialist agents within Agent Teams internally launch Subagents is already being tried by some users. The comparison laid out here also serves as a foundation for evaluating future pattern extensions.

The previous Agent Skills Practical Guide 2026 combined with this article allows you to approach AI workflow design from two axes: skill-building within a single agent and coordination between multiple agents. For those wanting to learn the fundamentals of multi-agent design, also refer to CCA Domain 1 Agentic Architecture Part 1. Next, we’ll cover Claude Cowork Practical Guide 2026, exploring how to leverage the Cowork feature that debuted in the Desktop version.

For the latest information, see the official documentation at docs.anthropic.com and the official Claude Code repository. Since Agent Teams is scheduled for continuous feature expansion throughout 2026, we recommend tracking the latest specifications from official sources.